Control the data employees share with AI

See the shadow AI your approved list misses. Govern data usage in unapproved tools and personal sessions. Let teams use AI safely with guardrails.

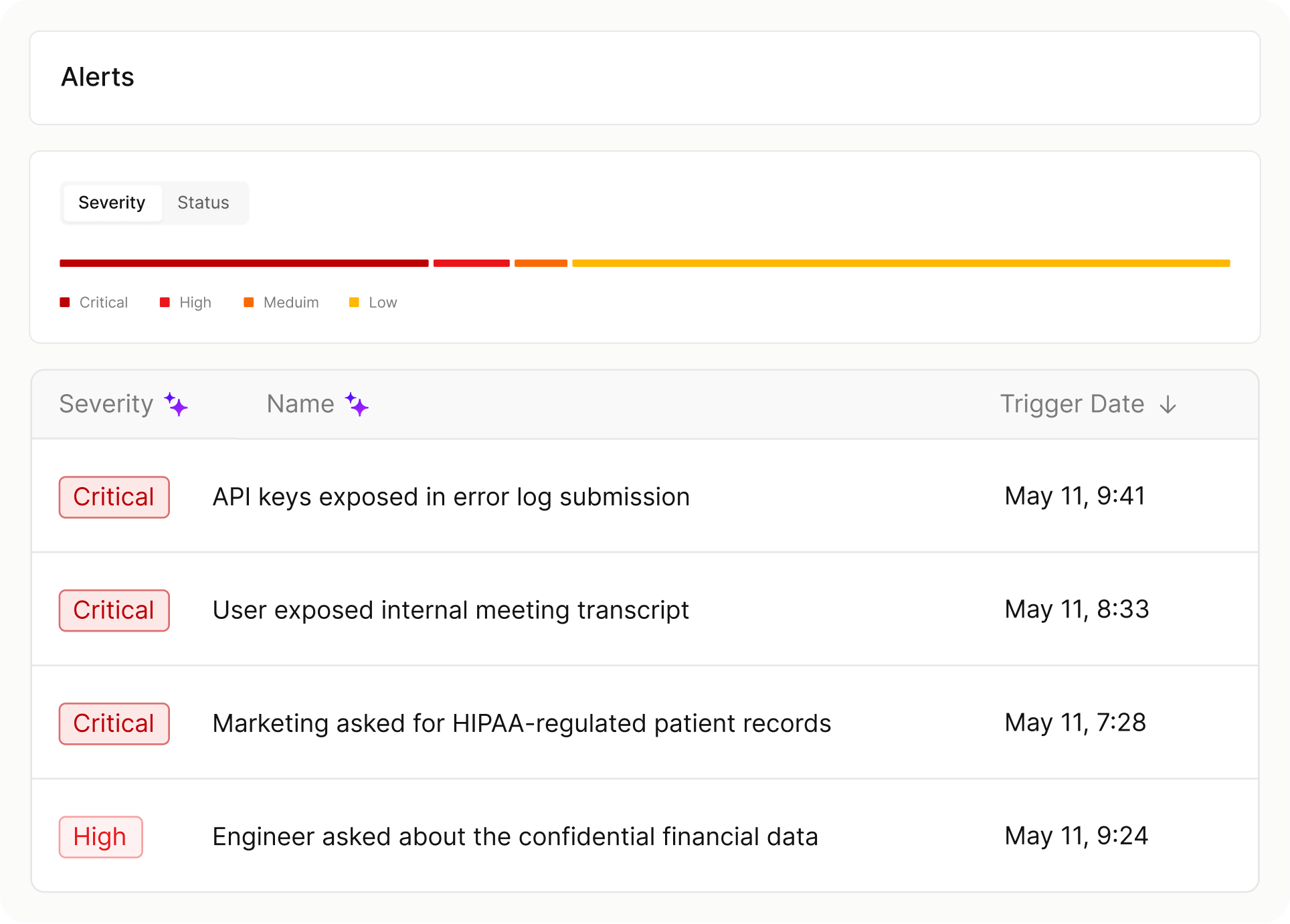

Know what data is going into shadow and enterprise AI

Don’t just track who is using AI. Understand the data and context behind employee interactions so your policy matches the risk.

Know how your employees use AI

See the AI tools employees actually use, not just the ones on your approved list. Identify personal account logins, unmanaged domains, and sensitive data moving through prompts your network-level tools can miss.

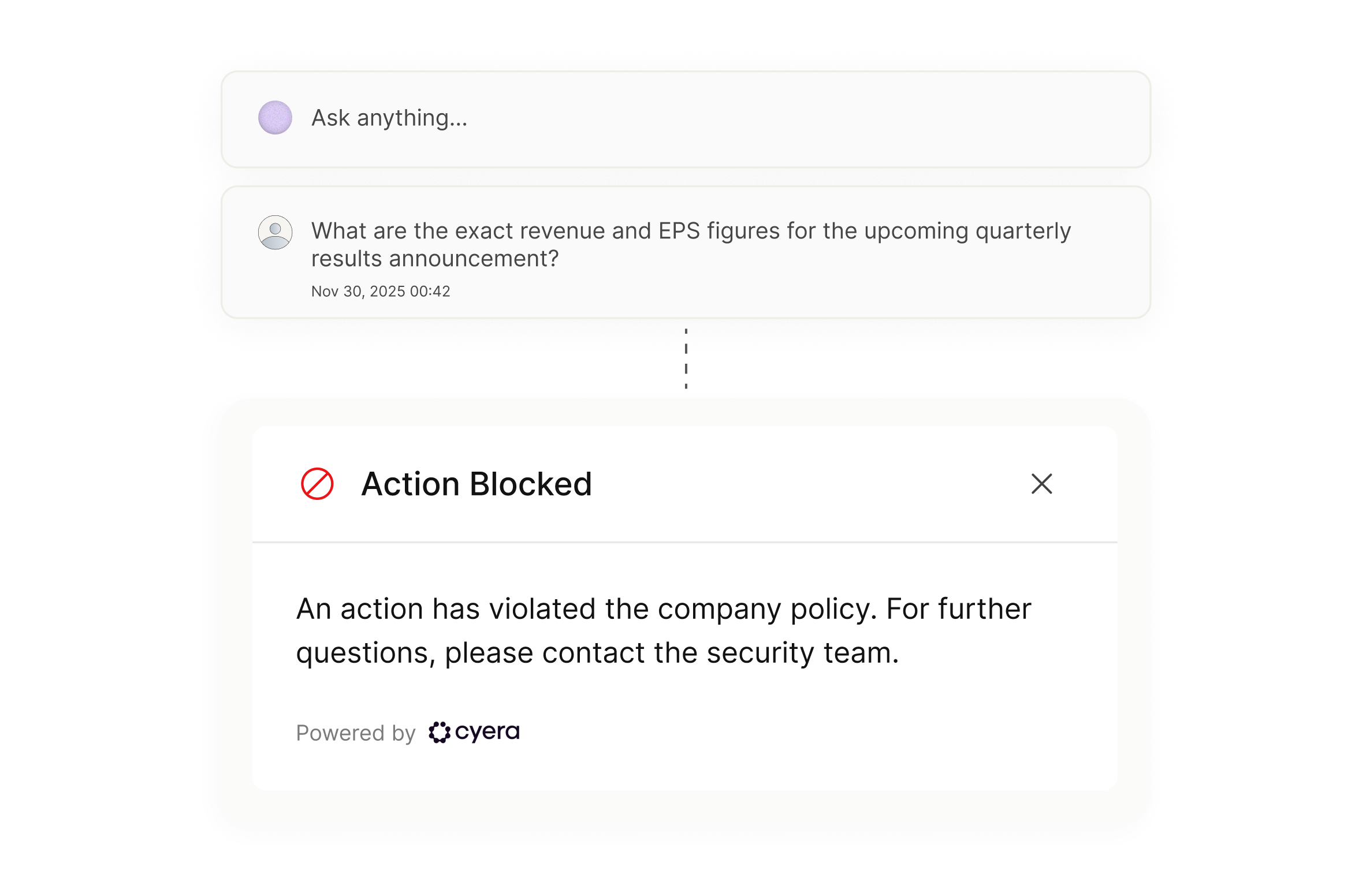

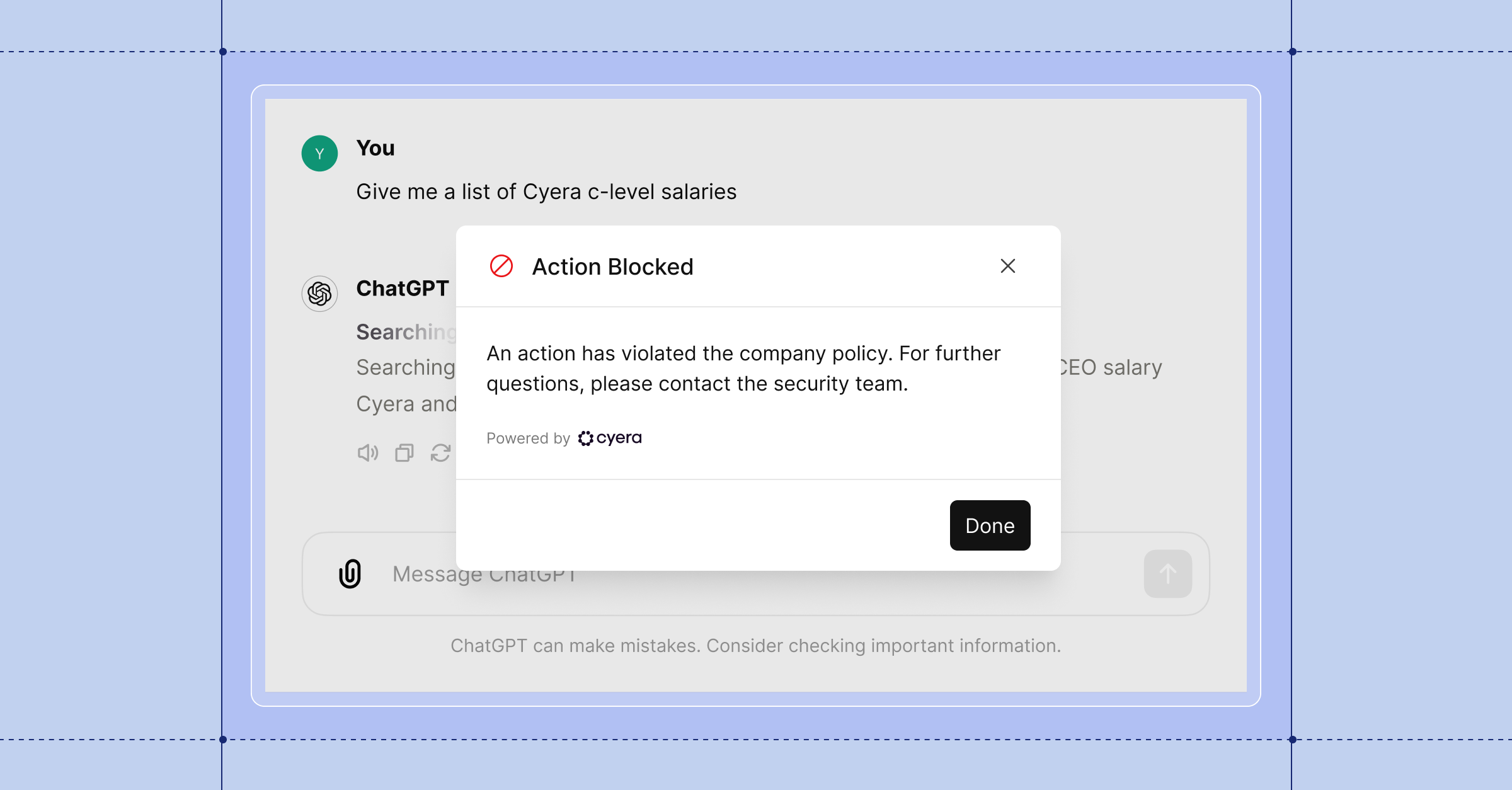

Keep your data out of the wrong prompts

Employees work fast in AI. Sometimes too fast. See the sensitive data in every prompt before it’s submitted, whether the destination is public ChatGPT or sanctioned Microsoft 365 Copilot. Block prompts that leak data or cross a line, whether the employee meant to or not.

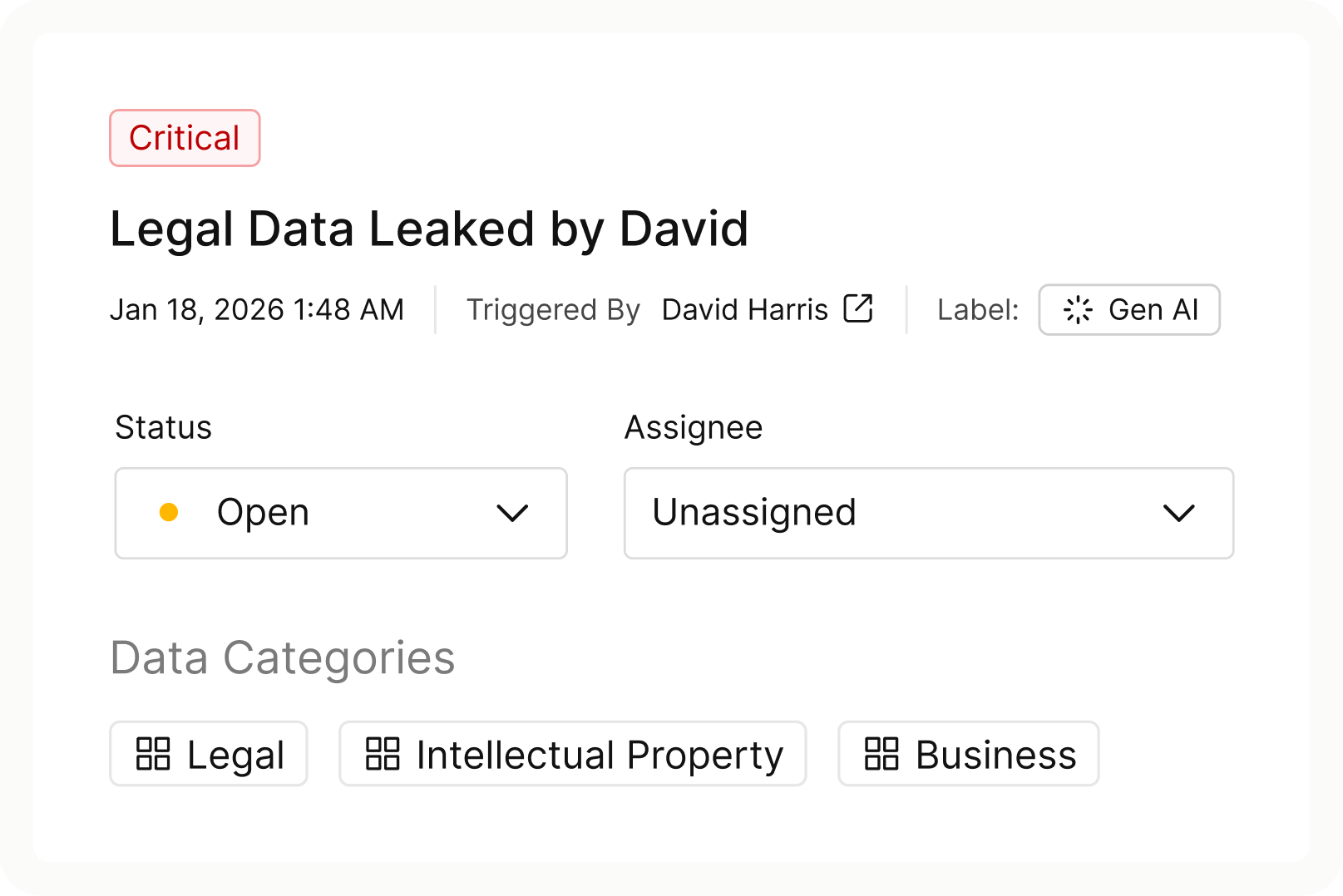

Stop the insider threat, not just the prompt

Surface early signs of data exfiltration by connecting behavioral patterns, access anomalies, and data movement across every channel, beyond AI. Distinguish business-as-usual from emerging risk with full identity, data, and behavioral context.

Cyera Named a Leader in The Forrester Wave™: Sensitive Data Discovery And Classification Solutions, Q2 2026


FAQs

Cyera’s AI Employee Security is a solution for seeing how employees use AI, protecting sensitive data at the moment of interaction, and catching insider risk before it spreads. Enterprises need it because employees are adopting AI faster than policy, IAM, or traditional DLP can keep up, leaving security teams without visibility into which tools are in use, which accounts are connected, and what sensitive data is flowing through prompts.

Most AI security solutions watch the network, the permissions, the browser, or the prompt in isolation. Cyera’s AI Employee Security solution watches the data, the user, and the prompt together, connecting data intelligence with identity context and real-time prompt inspection across every AI tool employees touch, from public chatbots to browser-based apps to enterprise copilots. The result is enforcement at the point of interaction, not after the fact.

Policies are based on the sensitivity and context of the data involved, not blanket rules. Instead of regex or keyword matching, Cyera uses data classification driven by enterprise-grade DSPM to recognize what's actually sensitive. Employees use AI freely for everything that doesn't put real data at risk. When an action crosses a line, like pasting customer records into a public chatbot, Cyera intervenes at the moment of interaction.
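As a rough illustration of the difference between a blanket rule and a context-based policy, the sketch below decides what to do with a prompt from the sensitivity of its data and where it is going. It is not Cyera's implementation or API: the names `Sensitivity`, `PromptContext`, and `decide`, the three sensitivity levels, and the specific allow/warn/block thresholds are all assumptions made for this example, and the sensitivity label is assumed to come from an upstream classifier.

```python
from dataclasses import dataclass
from enum import Enum


class Sensitivity(Enum):
    """Hypothetical sensitivity labels; a real classifier may use others."""
    PUBLIC = 0
    INTERNAL = 1
    RESTRICTED = 2


class Action(Enum):
    ALLOW = "allow"
    WARN = "warn"
    BLOCK = "block"


@dataclass(frozen=True)
class PromptContext:
    """Hypothetical context attached to a prompt before it is submitted."""
    sensitivity: Sensitivity      # assumed output of an upstream data classifier
    destination_sanctioned: bool  # True for an approved enterprise AI tool
    personal_account: bool        # True when the session uses a personal login


def decide(ctx: PromptContext) -> Action:
    """Pick an action from data sensitivity and destination context.

    The thresholds are illustrative assumptions, not a vendor policy.
    """
    # Non-sensitive data is never restricted, whatever the destination.
    if ctx.sensitivity is Sensitivity.PUBLIC:
        return Action.ALLOW

    # Treat personal logins and unapproved tools as unmanaged destinations.
    unmanaged = ctx.personal_account or not ctx.destination_sanctioned

    if ctx.sensitivity is Sensitivity.RESTRICTED:
        return Action.BLOCK if unmanaged else Action.WARN

    # Remaining case: INTERNAL data.
    return Action.WARN if unmanaged else Action.ALLOW


if __name__ == "__main__":
    # Customer records pasted into a public chatbot.
    leak = PromptContext(Sensitivity.RESTRICTED, destination_sanctioned=False,
                         personal_account=False)
    print(decide(leak).value)
```

The point of the sketch is that the same employee and the same tool can produce different outcomes depending on the data involved, which a static blocklist of tools cannot express.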

Three main categories. Accidental data leakage, when an employee pastes sensitive information into a public AI tool. Unethical or unauthorized AI use, when employees use AI in ways that violate company policy. And insider threats, including early signs of data exfiltration that start in AI and extend across network, endpoint, and cloud channels. Cyera catches all three by connecting real-time prompt inspection with cross-channel behavioral context.

Cyera uses agentless, API-based integrations that require no changes to your AI infrastructure. Most organizations go from initial deployment to full visibility into shadow AI within 24 to 48 hours, and from discovery to active enforcement in weeks, not months.

Enterprises should prioritize context-based policies over blanket bans, monitor prompts across all AI tools in real time, and link AI activity to broader user behavior. Using Cyera’s DSPM ensures security teams focus on truly sensitive data.

Cyera uses context-aware policies to identify sensitive information in real time. This "moment of risk" intervention lets employees use AI for daily tasks, triggering a block only when specific, protected data is about to be shared.
