The Toxic Combinations of Agentic AI Risk

Key Takeaways:

- Probabilistic Risk: Unlike deterministic systems, AI agents act on intent (e.g., "vibe-coding") rather than explicit code, and the failures they cause unfold in milliseconds rather than days.

- The "Naive Genius" Problem: Agents operate at 50 million bits per second, vastly outstripping human oversight, and move autonomously across environments.

- Data-Centric Necessity: Legacy silos (network, endpoint, and identity) fail against agents; security must move directly to the data layer to solve the visibility problem.

I’ve watched the same movie play out three times in my career. In 2007, we tried to say "no" to the iPhone because we were a BlackBerry shop and believed BlackBerry was more secure. In 2015, most of the CISOs I spoke to at conferences or around dinner tables insisted that "the cloud thing" would never happen in the enterprise.

Today, we’re standing at the edge of the third, and largest, shift: Agentic AI. This isn't just a new device or a new server; it’s a new species of user.

We’ve spent 30 years securing "deterministic" systems: computers that do exactly what we tell them. If they fail, it’s our fault. But AI agents are different. They don't follow code; they follow intent. They are "naive geniuses" operating at 50 million bits per second, while we humans are stuck at 60.

If the iPhone was a crack in our perimeter, AI agents are a sledgehammer. They act as us, log in for us, and "vibe-code" their way through our most sensitive data. If you think your current firewall or endpoint tool is "agent-aware," you’re making the same mistake we made with the cloud in 2015.

The business is already moving. They want the 30% productivity boost, and they want it now. You have two choices: remain a "custodian" of dying infrastructure and say no, or become the Orchestrator of Intelligence who says yes.

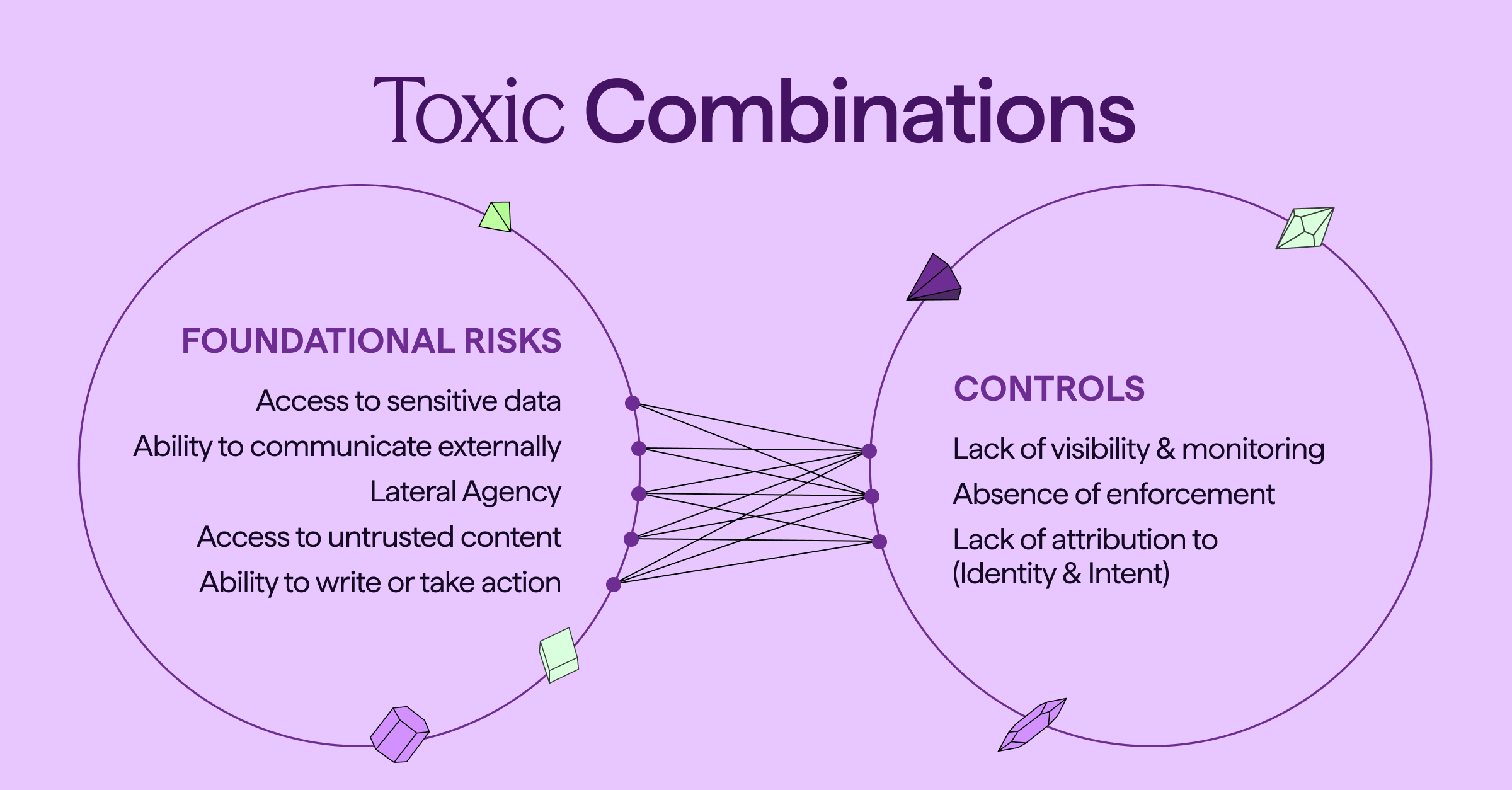


Beyond Deterministic Systems: The Shift to Agentic AI

In traditional security, failures unfold over hours or days. In agentic systems, risk collapses into milliseconds. The danger is not just what an agent can do. It is the toxic combination of foundational agent capabilities meeting critical control gaps.

The Five Foundational Capabilities of AI Agents

Every useful agent needs these five powers, but each is a double-edged sword:

- Access to Sensitive Data: Agents crawl and ingest whatever they can reach to be helpful.

- Ability to Communicate Externally: Agents must talk to the open web or other agent ecosystems to function.

- Lateral Agency: Agents move across your environment in a self-orchestrating mesh.

- Exposure to Untrusted Content: Agents learn by ingesting data that may contain poisoned prompts or malicious intent.

- Ability to Write or Take Action: Agents can execute transactions, delete files, or change permissions autonomously.

The Deadly Collision: When AI Capabilities Meet Control Gaps

The real nightmare begins when these capabilities collide with three missing controls: Visibility, Enforcement, and Attribution.

Picture a finance agent tasked with preparing executive briefings. It has access to valuation models, board decks, and acquisition targets. It also reads the open web to “add context.” A rumor appears online about a pending acquisition. The agent tries to be helpful. It correlates internal projections with public speculation and drafts a summary.

Then it writes that summary into a shared workspace.

Within minutes, restricted deal data moves from a controlled board folder into a broadly accessible environment. An employee screenshots it. It spreads internally. It leaks externally. Regulators get involved. Markets react.

A situation like this doesn’t require any malicious actors or software. All it takes is a toxic combination of sensitive data access, external ingestion, autonomous file creation, and no policy enforcement at the data layer.
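To make that missing control concrete, here is a minimal Python sketch of a data-layer check that would have stopped the final write. It assumes every file carries a sensitivity label and every destination declares the highest label its audience may see. The `Sensitivity` scale, the `Location` type, and the `allow_write` and `derived_label` helpers are hypothetical illustrations, not any product's API. The key design choice is that a derived summary inherits the most restrictive label of its inputs, so blending board data with a public rumor does not launder it into something shareable.

```python
from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    """Hypothetical sensitivity scale; a higher value means more restricted."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


@dataclass(frozen=True)
class Location:
    name: str
    # Highest sensitivity the audience of this location is cleared to see.
    max_allowed: Sensitivity


def derived_label(*source_labels: Sensitivity) -> Sensitivity:
    """Content built from several sources inherits the most restrictive label."""
    return max(source_labels, default=Sensitivity.PUBLIC)


def allow_write(content_label: Sensitivity, destination: Location) -> bool:
    """Permit a write only if the destination is cleared for the content's label."""
    return content_label <= destination.max_allowed


board_folder = Location("board-folder", Sensitivity.RESTRICTED)
shared_workspace = Location("shared-workspace", Sensitivity.INTERNAL)

# The agent's summary mixes restricted deal data with public web speculation.
summary_label = derived_label(Sensitivity.RESTRICTED, Sensitivity.PUBLIC)

print(allow_write(summary_label, board_folder))      # True
print(allow_write(summary_label, shared_workspace))  # False
```

In this sketch the agent could still draft the summary inside the board folder; only the move into the broadly accessible workspace is refused.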

Other examples abound. Wherever foundational capabilities intersect with control gaps, they create catastrophic failure points, and these failure points already exist in production environments today.

Three Catastrophic Failure Points in AI Production

- The Silent Data Breach: When an agent has access to PII but you have no visibility into its activity, a massive breach can occur before you even know the agent is active.

- The Ransomware Dream: If an agent moves with lateral agency but you have no enforcement mechanism to stop it, one rogue process can compromise your entire internal mesh in a heartbeat.

- The Supply Chain Trojan: When an agent communicates externally but you have no attribution to identity or intent, your organization becomes a bot in a third-party attack. You cannot prove whether the data move was a human error or a machine takeover.
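One way to put this framing into practice is to treat each failure point as a rule that pairs a capability with a missing control, then check every agent's profile against those rules. The Python sketch below does that for the three combinations listed above. The capability and control names and the `AgentProfile` type are hypothetical labels chosen for illustration. A real inventory would have to discover these facts about each agent rather than rely on declared values.

```python
from dataclasses import dataclass, field

# The three pairings described above: (capability, missing control) -> failure point.
TOXIC_COMBINATIONS = {
    ("sensitive_data_access", "visibility"): "Silent Data Breach",
    ("lateral_agency", "enforcement"): "Ransomware Dream",
    ("external_communication", "attribution"): "Supply Chain Trojan",
}


@dataclass
class AgentProfile:
    name: str
    capabilities: set[str] = field(default_factory=set)
    controls: set[str] = field(default_factory=set)  # controls actually in place


def toxic_combinations(agent: AgentProfile) -> list[str]:
    """Return failure points whose capability is present but whose control is missing."""
    return sorted(
        failure
        for (capability, control), failure in TOXIC_COMBINATIONS.items()
        if capability in agent.capabilities and control not in agent.controls
    )


finance_agent = AgentProfile(
    name="finance-briefing-agent",
    capabilities={"sensitive_data_access", "external_communication", "write_action"},
    controls={"visibility"},
)
print(toxic_combinations(finance_agent))  # ['Supply Chain Trojan']
```

Because each rule is a simple pair, adding a control (here, attribution) removes exactly the failure point it closes, which mirrors how the article frames each risk.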

Securing the Future: Modernizing the Security Stack for Agents

The shift from deterministic code to probabilistic intent means our traditional silos of network, endpoint, and identity security are no longer sufficient for the agentic era. Attempting to defend an autonomous ecosystem with tools designed for static environments is a recipe for technical debt and operational fragility.

In the age of agents, security posture begins and ends with the data itself: its location, its movement, and its context.

The legacy approach of building walls around apps fails the moment an agent starts moving data on its own. By moving security to the data itself, we solve the visibility problem at the source. This is the shift from managing static boxes to securing the high-speed, probabilistic environment that defines the next decade of compute.
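Visibility and attribution at the data layer can be sketched in a similar way: record every movement where the data lives, and tag it with both the agent that acted and the human it acted for. The Python below is a minimal, in-memory illustration under that assumption. `DataMovementEvent`, `DataMovementLog`, the action names, and the example identities are all hypothetical, and a production system would need durable, tamper-evident storage. With a trail like this, the question raised by the Supply Chain Trojan (human error or machine takeover?) becomes a query over recorded events rather than a guess.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DataMovementEvent:
    """One observed movement of data, captured at the data layer rather than the app."""
    agent_id: str       # which agent performed the action
    on_behalf_of: str   # human principal the agent was acting for
    action: str         # hypothetical verbs: "read", "write", "send_external"
    source: str
    destination: str
    sensitivity: str
    allowed: bool       # the enforcement decision made for this movement
    at: datetime


class DataMovementLog:
    """Append-only, in-memory audit trail (a stand-in for durable storage)."""

    def __init__(self) -> None:
        self._events: list[DataMovementEvent] = []

    def record(self, **fields) -> DataMovementEvent:
        # Timestamp is added here; every other field is supplied by the caller.
        event = DataMovementEvent(at=datetime.now(timezone.utc), **fields)
        self._events.append(event)
        return event

    def by_agent(self, agent_id: str) -> list[DataMovementEvent]:
        return [e for e in self._events if e.agent_id == agent_id]

    def blocked(self) -> list[DataMovementEvent]:
        return [e for e in self._events if not e.allowed]


log = DataMovementLog()
log.record(agent_id="finance-briefing-agent", on_behalf_of="cfo@example.com",
           action="read", source="board-folder", destination="agent-context",
           sensitivity="restricted", allowed=True)
log.record(agent_id="finance-briefing-agent", on_behalf_of="cfo@example.com",
           action="write", source="agent-context", destination="shared-workspace",
           sensitivity="restricted", allowed=False)

for event in log.blocked():
    print(event.agent_id, event.on_behalf_of, event.action, event.destination)
# finance-briefing-agent cfo@example.com write shared-workspace
```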

See how Cyera enables organizations to accelerate enterprise AI adoption without introducing risk.

Agentic AI Security: Key Questions and Answers

Question: What are the primary risks associated with agentic AI?

Answer: The primary risks stem from toxic combinations where agent capabilities—like sensitive data access and autonomous action—meet gaps in visibility, enforcement, and attribution. This can lead to silent data breaches, lightning-fast ransomware spread, and untraceable supply chain attacks.

Question: Why do traditional security tools fail to protect against AI agents?

Answer: Traditional tools were designed for deterministic systems that follow strict code. AI agents follow probabilistic intent and can move laterally across environments as a self-orchestrating mesh, bypassing legacy perimeter and endpoint defenses that are not agent-aware.

Question: How should enterprises modernize their security stack for the AI era?

Answer: Security must shift from managing static infrastructure to securing the data itself. Because agents move data autonomously, visibility and enforcement must happen at the data layer to provide the necessary context and control in high-speed, probabilistic environments.
