The End of Volume-Based Severity: Rebuilding Risk Assessment with AI

Sensitive data discovery has improved significantly, but is yet to be an solved issue. Most teams can find regulated data across cloud storage, SaaS, collaboration tools, logs, and backups. The harder part now is what happens next: turning visibility into risk reduction.

What slows security teams down isn’t detection; it’s deciding quickly and defensibly which findings are real risk and which are noise. As data environments scale and fragment, triage becomes a bottleneck.

In other words, the way we surface severity doesn’t align with how humans understand risk.

Key highlights

- Severity models based primarily on counting sensitive records fail to represent real-world risk because they ignore context such as data origin & lifecycle, access scope, and operational relevance.

- LLM-driven interpretation enables severity and prioritization to be derived from evidence-based context, aligning automated explainability with how experienced practitioners reason about risk.

- Contextual issue severity, generated immediately at discovery time,acts as an AI bot SOC analyst- removes manual triage bottlenecks and enables security teams to act on the riskiest issues with greater speed and confidence.

The unresolved problem of severity

In theory, severity ranking should guide action. In practice, it often obscures it.

Many data security systems rely on strict, rigid severity models built around easily measurable signals: the presence of sensitive data types and the volume of matching records. While consistent, these models are often driven by regulatory requirements (e.g., classifying a single credit card number in plain text as critical) which is useful for reporting, but fail to capture the actual risk and priority for remediation. When models are prone to high volumes of records and classify everything as critical, they dilute true urgency and are frequently misaligned with what resource-constrained security teams actually need to do next, as they don't capture the relations between different factors and context.

A count of sensitive records does not convey whether the data is production or non-production, dynamically identify toxic combinations, understand what passwords are used for, and whether access is limited to a narrow operational role, aligned with business needs, or granted broadly across engineering, QA, infrastructure, or external collaborators. Critically, it does not describe risk.

As a result, security teams are routinely directed toward large, high-volume issues while smaller but far more consequential exposures are deprioritized. The system behaves consistently, but not intelligently.

This is not a tuning failure. It is a conceptual one.

Risk is contextual by nature

When experienced practitioners assess data exposure manually, they do not start with volume. They start with interpretation.

In classical risk models, risk is typically defined as a function of impact and likelihood. Impact reflects the magnitude of potential harm- financial, regulatory, operational, or strategic. Likelihood reflects the probability that a given exposure will be exploited or will lead to material consequence.

Both dimensions are inherently contextual.

They examine what the data represents, why it exists, how it is used, and who can reach it. They evaluate whether exposure would plausibly lead to financial loss, regulatory consequences, operational disruption, or strategic harm. Those judgments depend on context, not a single metric.

Historically, this type of reasoning did not scale. Encoding it required brittle rule sets or extensive human review. As a result, most systems stopped at detection and classification, leaving interpretation to overburdened teams.

Recent advances in large language models change this constraint. For the first time, it’s possible to synthesize semantic signals across content, structure, access, and lifecycle in a way that approximates expert reasoning - without manual triage.

This shift is the foundation of Cyera’s AI-powered issue severity.

From classification to interpretation

Cyera’s approach to severity does not treat it as a traditional risk score, where each factor contributes independently, but as a conclusion to be derived. Each issue is evaluated using multiple contextual dimensions simultaneously: the nature of the issue, the files involved and their essence, the classifications detected, and the human context, specifically, who the data belongs to. Furthermore, temporal factors such as the data's age and retention status, along with the governing access paths and identities, are crucial for assessing the actual exposure risk.

Large language models enable these signals to be interpreted together rather than in isolation. Severity emerges from how these factors interact, not from any single indicator.

From volume-based ranking to context-based prioritization

This difference becomes immediately visible in how issues are ranked.

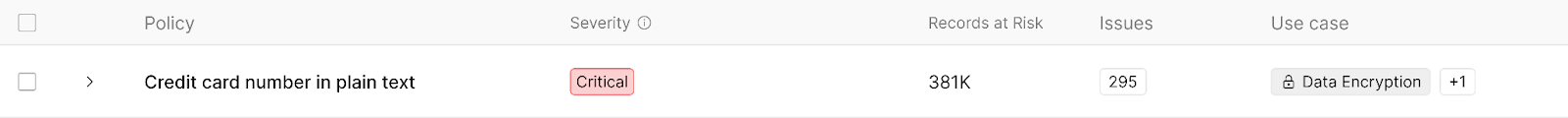

From this

In traditional systems, issues often surfaced as long lists dominated by volume. A single issue category- such as “credit card number in plain text”- may appear critical purely because it contains hundreds or thousands of matches, without any explanation of what those matches represent.

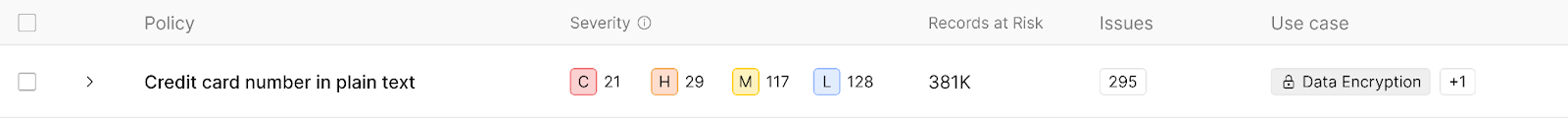

To this

With Cyera’s AI-powered issue severity ranking changes. Issues rise or fall based on contextual risk, not only raw counts. Each issue is accompanied by a clear, evidence-based explanation of why it matters.

From counts to explanations

Consider a common example.

In many systems, an issue might be summarized as:

“513 credit card numbers in plain text”

That number alone offers no guidance. It does not indicate whether the data is operational, historical, or synthetic. It does not explain exposure. It does not support a remediation decision.

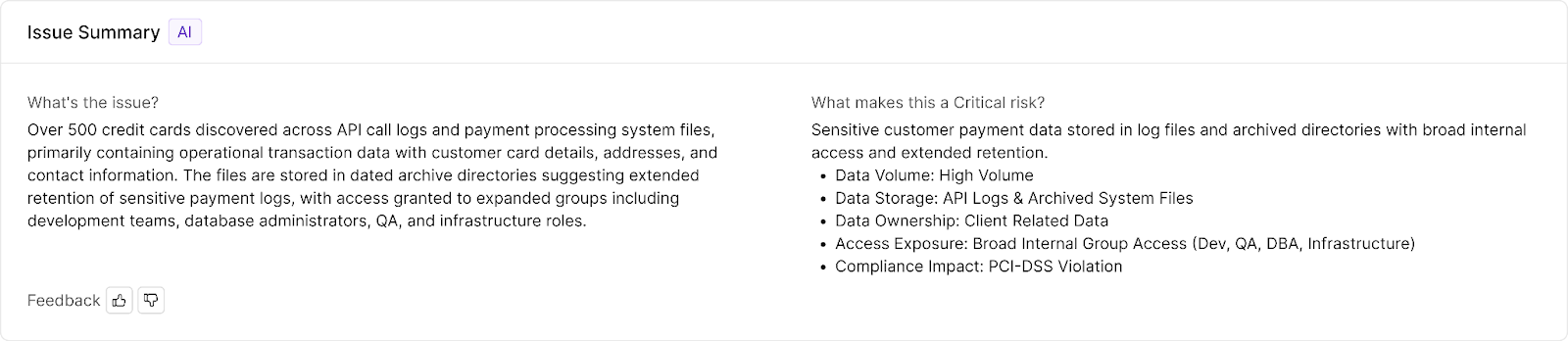

With Cyera’s AI-powered issue severity, the same finding is presented as:

Over 500 credit cards were discovered across API call logs and payment processing system files, primarily containing operational transaction data with customer card details, addresses, and contact information. The files are stored in dated archive directories, suggesting extended retention of sensitive payment logs, with access granted to expanded groups including development teams, database administrators, QA, and infrastructure roles.

This explanation is not decorative. It encodes the reasoning behind severity. It describes where the data came from, how it is used, why it is accessible, and why it represents risk now. Instead of investigating just to understand, the team can move straight to remediation decisions.

When ranking changes, behavior follows

The impact of contextual severity becomes most visible when examining issues that appear structurally similar but carry fundamentally different risk profiles.

In volume-based systems, issues rise in priority because they contain many sensitive values or match predefined policy conditions. The ranking logic is straightforward: more records imply greater risk, and any policy violation is treated as inherently severe. A finding such as “credit card number in plain text” may dominate a dashboard purely due to scale, even if the underlying data is stale, archived, or accessible only within a narrow operational boundary.

Contextual prioritization alters this dynamic by shifting the unit of analysis from the policy violation to the risk scenario.

A policy violation describes a rule that has been broken. A risk scenario describes a plausible path to impact.

The distinction is not semantic; it is structural.

Consider file sharing with a personal email address. Two events may appear identical from a policy perspective:

- An employee shares a document containing payroll information with their own personal email address.

- An employee shares a confidential client proposal or internal strategic document with the same personal email address.

Both involve external sharing. Both may contain sensitive information. Both may trigger the same policy control.

Under a rule-based model, they are equivalent violations.

Under a contextual model, they are different risk scenarios.

In the first case, the data may represent the employee’s own compensation record. The exposure may be undesirable from a governance standpoint, but the likely impact is limited. In the second case, the document may contain proprietary pricing models, contractual obligations, or strategic planning materials. The exposure creates a credible path to competitive harm or contractual liability.

The policy event is the same. The impact profile is not.

This distinction extends beyond external sharing. It is inherent in how exposure operates within modern data environments.

Two datasets may contain the same number of regulated identifiers and trigger the same classification controls. Yet their risk profiles can diverge sharply depending on access geometry and operational role. A dataset confined to a single operational function presents a fundamentally different exposure surface than one accessible across engineering, QA, infrastructure, and external collaborators- even if both contain identical sensitive signals.

Traditional severity models flatten these differences by evaluating compliance states rather than consequence pathways. They answer whether a rule has been violated, but not whether a credible path to material impact exists.

Contextual severity evaluates the latter.

Instead of ranking issues by volume or static policy conditions, it assesses the structure of exposure: whether sensitive data is embedded in live operational workflows, retained beyond business necessity, accessible beyond functional need, or shared in a way that alters the likelihood and scale of downstream harm.

This reframing shifts the unit of analysis from data artifact to risk configuration.

For example, a finding summarized as “513 credit cards” conveys only quantity. A contextual explanation distinguishes whether those cards reside in active payment processing outputs, archival log repositories, synthetic testing environments, or publicly accessible workflows. It captures whether access is tightly governed or broadly distributed, and whether retention patterns amplify exposure unnecessarily.

The distinction determines the nature of response. Some issues require urgent containment. Others require architectural correction. Still others represent administrative hygiene.

When ranking reflects exposure configuration rather than rule violation, remediation strategy becomes proportional to risk. Security effort concentrates on issues that present credible organizational consequences, rather than on those that merely satisfy numeric thresholds.

Immediacy as a design principle

A critical characteristic of Cyera’s severity model is that interpretation occurs at the time of discovery. Contextual explanations are generated immediately when the issue is discovered, not after a secondary investigation phase.

This matters operationally. Without built-in interpretation, security teams must spend significant time validating findings before they can begin remediation decisions. Contextual severity removes this friction by delivering actionable understanding alongside detection.

The result is not the automation of judgment but its preservation. Human expertise is applied to decisions that require it, rather than to reconstructing the basic context.

Beyond regulated data

Contextual severity also exposes a limitation of compliance-driven prioritization. Some of the most consequential data exposures do not center on regulated identifiers at all.

Files containing executive compensation details, internal strategic plans, proprietary technical documentation, or sensitive legal correspondence often appear in environments with overly broad access or public sharing configurations. These files may not trigger the highest alerts in classification-centric systems, yet their exposure can carry disproportionate organizational risk.

By interpreting content, access scope, and relevance together, contextual severity surfaces these issues without relying on predefined taxonomies.

Severity as a foundational capability

As enterprise data environments continue to expand and diversify, prioritization can no longer be treated as a secondary feature layered on top of discovery. It is a foundational capability that determines whether visibility leads to action or paralysis.

From a research perspective, Cyera’s contribution is not simply the use of LLMs, but the reframing of severity as an interpretive problem rather than a numerical one. This reframing enables security teams to move from counting findings to understanding risk.

.png)

.svg)