The Data Taxonomy Illusion: Why Security Teams Are Solving the Wrong Problem

.png)

KEY TAKEAWAYS

1. Static classification taxonomies and regex-based customization create a persistent gap between what security tools report and what your business actually needs to protect - requiring endless fine-tuning and full rescans just to reflect a single change.

2. True data taxonomy spans classification, risk, and sensitivity - and every organization defines each of those dimensions differently. A customer-native taxonomy is the foundation for making DSPM operationally meaningful.

3. Unlike a data catalog, a business-native security taxonomy is designed to act - surfacing risk, driving policy, and enabling decisions - not just to inventory what exists.

The Vocabulary Problem

There's a quiet frustration that runs through most data security conversations - one that rarely makes it into vendor presentations or conference keynotes. Security teams spend enormous energy classifying data, building policies around those classifications, and then watching their work fail the moment a real investigation begins.

The failure isn't technical. The tools often work exactly as designed. The problem is that the design is built on a flawed premise: that a platform's definition of "sensitive data" is the same as your organization's. It isn't. And that gap - between how security systems see data and how businesses actually understand it - is one of the most underexamined risks in enterprise security today.

Take PII. It remains one of the most critical data security use cases, and rightly so. But here's the question every organization should be asking: what does PII actually mean in your environment? Does your definition include employee data? If a document contains a full name and a department, does that make it sensitive? What if it's a public-facing org chart? Two organizations can answer these questions completely differently - and both can be right. The platform has no way of knowing which answer is yours.

PII is not a universal definition. What counts as sensitive personal data in your organization depends on context,

policy, and business function - and only your taxonomy can encode that.

Traditional platforms have offered one solution to this problem: regular expressions. Write a regex, define a pattern, tune a classifier. In theory, this gives teams the flexibility to customize. In practice, it gives them an infinite maintenance burden. Regex-based customization handles narrow, structured patterns reasonably well, but it completely fails for the real challenge - the unstructured, context-dependent data that represents the majority of organizational risk. More fundamentally, it requires security teams to express business meaning in a language that was never designed to represent it.

The hidden cost of regex

Every time a regex needs updating, most platforms require a full environment rescan. In a large enterprise with petabytes of data across dozens of cloud environments, that means days - or weeks - of latency before a single taxonomy change takes effect. It makes iteration impractical and responsiveness impossible.

The rescan problem is not a minor inconvenience. It is a structural barrier to building an adaptive security program. If changing how you define a sensitive topic requires re-processing your entire data estate, you will change it as rarely as possible - and your taxonomy will drift further from your business reality over time.

Taxonomy Is More Than Classification

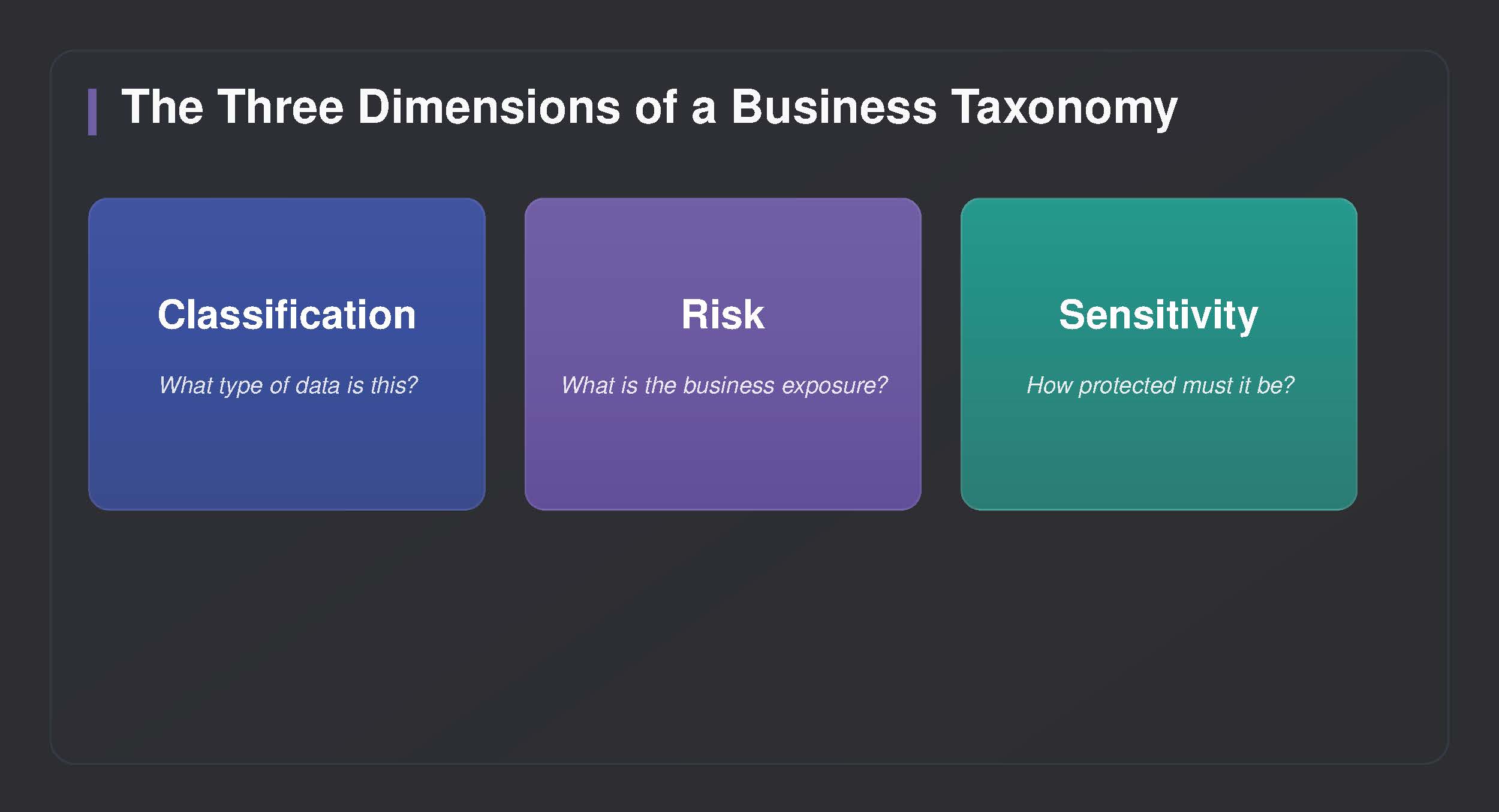

When most security professionals hear "taxonomy," they think about classification - the act of labeling data as a particular type. But a business taxonomy is three things simultaneously: classification, risk, and sensitivity. Each dimension carries equal weight, and customers define all three according to their own organizational context.

Classification answers the question of what a piece of data is. Risk answers the question of what its exposure means to the business. Sensitivity defines what level of protection it warrants. These three dimensions interact. A document that classifies as financial data might carry low risk if it's a historical public filing, and extremely high risk if it's forward-looking earnings guidance ahead of an earnings call. The sensitivity level changes accordingly.

A mature data taxonomy tells you what data is, why it matters, and how hard you should work to protect it - not just which regulatory checkbox it satisfies.

Large organizations often have formal sensitivity frameworks embedded in their governance structures - Confidential, Internal Use, Restricted, Public. A business-native DSPM taxonomy surfaces and operationalizes those frameworks, mapping your existing organizational definitions onto your data estate rather than imposing a new vocabulary on top of it. This is precisely why the taxonomy must be customer-owned: your sensitivity ladder is not the same as your competitor's.

What Organizations Actually Need

Large organizations have their own internal taxonomies - built over years, encoded in how teams name files, structure storage, and how leaders think about risk. A CISO at a consumer goods company doesn't think about risk in terms of "files containing financial identifiers." They think about product formulas, pricing strategy, trade secrets, and regulatory filings.

The disconnect between the platform's taxonomy and the business's taxonomy isn't a configuration problem. It's a translation problem. And in most organizations, that translation happens manually - by experienced analysts who learn, over time, what the system's labels mean in their specific context. This works, imperfectly, until scale breaks it.

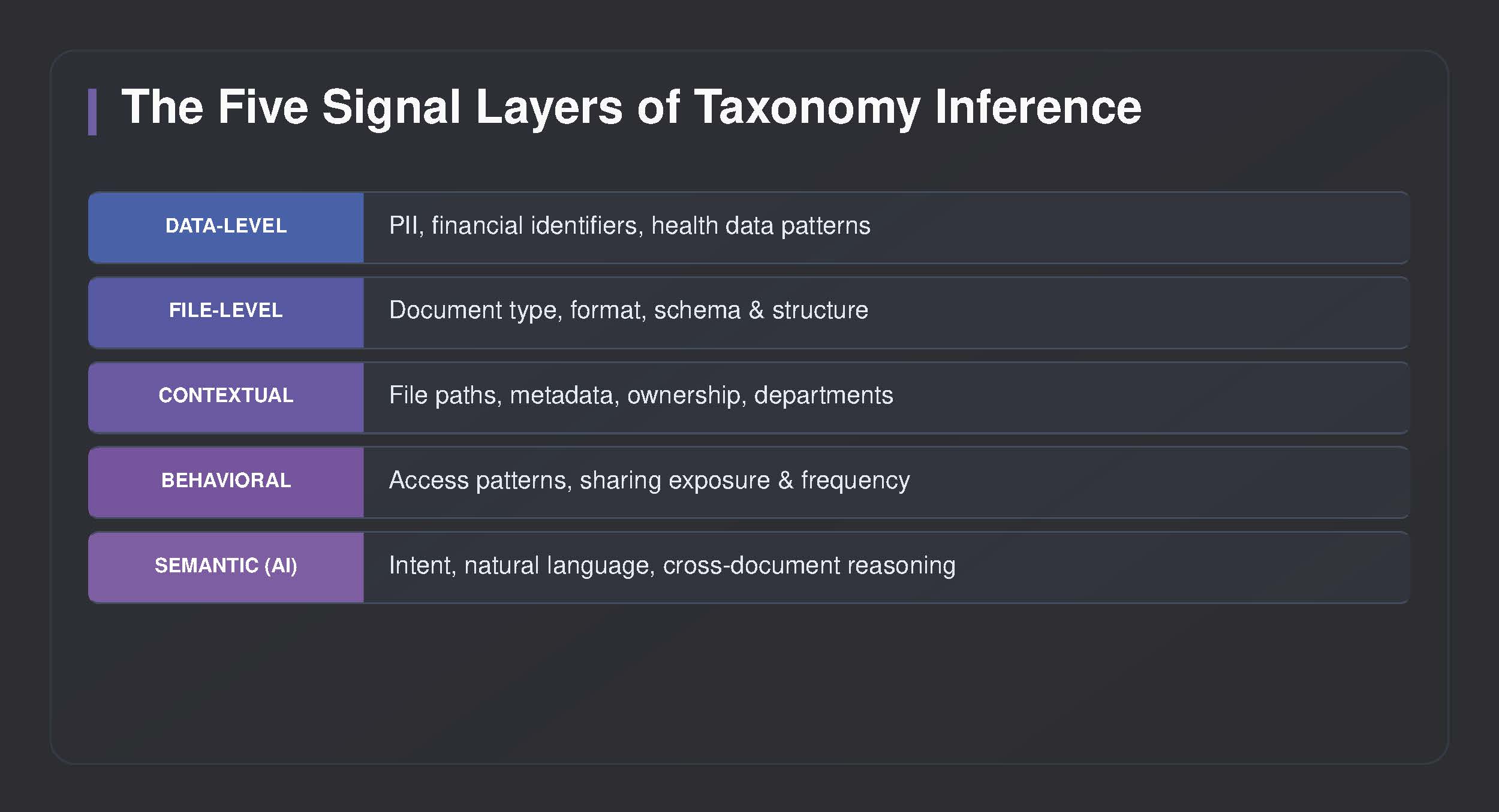

The organizations getting ahead of this problem are asking a different question. Rather than "how do we classify our data better," they're asking "how do we make our data security environment understand us?" The answer lies in combining multiple signal layers - from structured data patterns through to AI-powered semantic understanding - to infer business meaning rather than simply detect data types.

Validated in real deployments across enterprises managing hundreds of distinct data domains, this approach has demonstrated the ability to match business-native labels to platform capabilities with over 80% accuracy at scale - reducing the manual translation burden that has historically consumed security analyst capacity.

Pioneering the Rescan Problem

The technical challenge of making taxonomy changes take effect without full environment rescans is one of the most significant unsolved problems in DSPM. Most platforms today are architected around the assumption that classification is a periodic, batch process - scan, classify, report, repeat. Taxonomy changes break that loop by requiring everything to be re-evaluated.

A different architectural approach is required: one where taxonomy changes propagate incrementally, asynchronously, and continuously across the environment. When a new topic is defined or a sensitivity threshold is updated, the system should apply that change retroactively and prospectively without forcing a full rescan cycle. This is not an incremental improvement over the existing model - it is a rearchitecting of how classification pipelines are built.

The goal is a taxonomy that adapts as your business does - without grinding your environment through another rescan every time you need to reflect a real-world change.

The practical implication for security teams is significant: instead of a taxonomy that represents your organization as it was configured eighteen months ago, you can have one that reflects your organization as it is today. That is the difference between a static compliance artifact and an active security capability.

Is This Just a Data Catalog?

It's a fair question. Data catalogs have existed for years, and they solve a real problem: helping organizations understand what data they have and where it lives. A well-maintained catalog is genuinely valuable. But a business-native DSPM taxonomy is built for a different purpose, and the distinction matters.

A data catalog is fundamentally a documentation tool. It is designed to make data discoverable and understandable - primarily for data engineers, analysts, and data governance teams. It answers the question of inventory. A DSPM taxonomy is a risk operations tool. It is designed to surface exposure, drive policy, and enable response - for security teams making active decisions about what to protect and how.

The two are complementary, not competing. Organizations with mature data catalogs can use them as an input layer into a DSPM taxonomy - their existing metadata, classifications, and lineage information enriches the security context. But the taxonomy layer adds what catalogs are not designed to provide: continuous risk assessment, sensitivity enforcement, and active security operations across a live data environment.

A data catalog tells you what data exists. A business-native DSPM taxonomy tells you what's at risk, at what level, and what to do about it.

The Road Ahead

We are early in this shift. Most organizations are still operating with classification systems that were designed for a simpler data environment and a narrower threat model. The explosion of unstructured data, the expansion of AI-driven workflows, and the increasing sophistication of how data moves across organizations are pressures that static, regex-reliant taxonomies are not equipped to handle.

The direction is clear, even if the journey is ongoing: data security programs that will be effective at scale are ones that understand data the way the business understands it. Not as a collection of sensitive patterns to be flagged, but as a landscape of business meaning - spanning classification, risk, and sensitivity - to be navigated with precision.

Classification will always be part of the picture. But the organizations building durable security programs are the ones who recognize that classification is the beginning of understanding - not the end of it.

Classification is the beginning of understanding - not the end of it.

.avif)

.png)

.png)

.svg)