Introducing Cyera Browser Shield: Prevent Sensitive Data Leakage and Insider Risk in Browser AI Apps

See every AI tool used in the browser. Protect every interaction with data, identity, intent, and organizational context.

Key Takeaways

- Real-time prompt protection: Cyera Browser Shield monitors and evaluates every AI prompt before submission, preventing sensitive data from leaving your organization through public AI tools like ChatGPT, Gemini, and Perplexity.

- Identity-aware governance: The solution distinguishes between corporate and personal AI accounts, providing visibility into who uses which AI tools and applying appropriate policies based on account context and user identity.

- Flexible enforcement options: Organizations can start with monitoring and alerts before implementing blocking, allowing teams to understand AI usage patterns and mature their governance programs gradually without disrupting productivity.

- Unified data protection: Browser Shield integrates with Cyera Omni DLP to provide consistent policy enforcement across all data channels, creating a single investigation workflow for AI-related and traditional data security events.

Employees routinely use public, browser-based AI tools such as ChatGPT, Gemini, and Perplexity as part of their daily work. These tools help summarize content, refine writing, analyze documents, and troubleshoot issues. But prompts often contain sensitive data such as personally identifiable information (PII), financial data, proprietary code, or confidential business context. When submitted to public AI, that content may leave the organization without being checked against data policies. This creates risk from both accidental data leakage and intentional misuse at the point of prompt submission.

At the same time, security teams are trying to govern AI usage with tools that were not designed for this interaction model. They can see access to well-known AI domains, but they often lack visibility into newly accessed AI sites or the actual content being submitted. Network DLP typically does not inspect prompt text itself. Endpoint controls focus on files, not conversational inputs. And enterprise AI governance tends to apply within managed platforms, not across unsanctioned or shadow AI usage. The exposure point that often goes unaddressed is the prompt itself.

Cyera Browser Shield Overview

How Cyera Browser Shield Delivers Data-Aware Protection for Public AI Tools

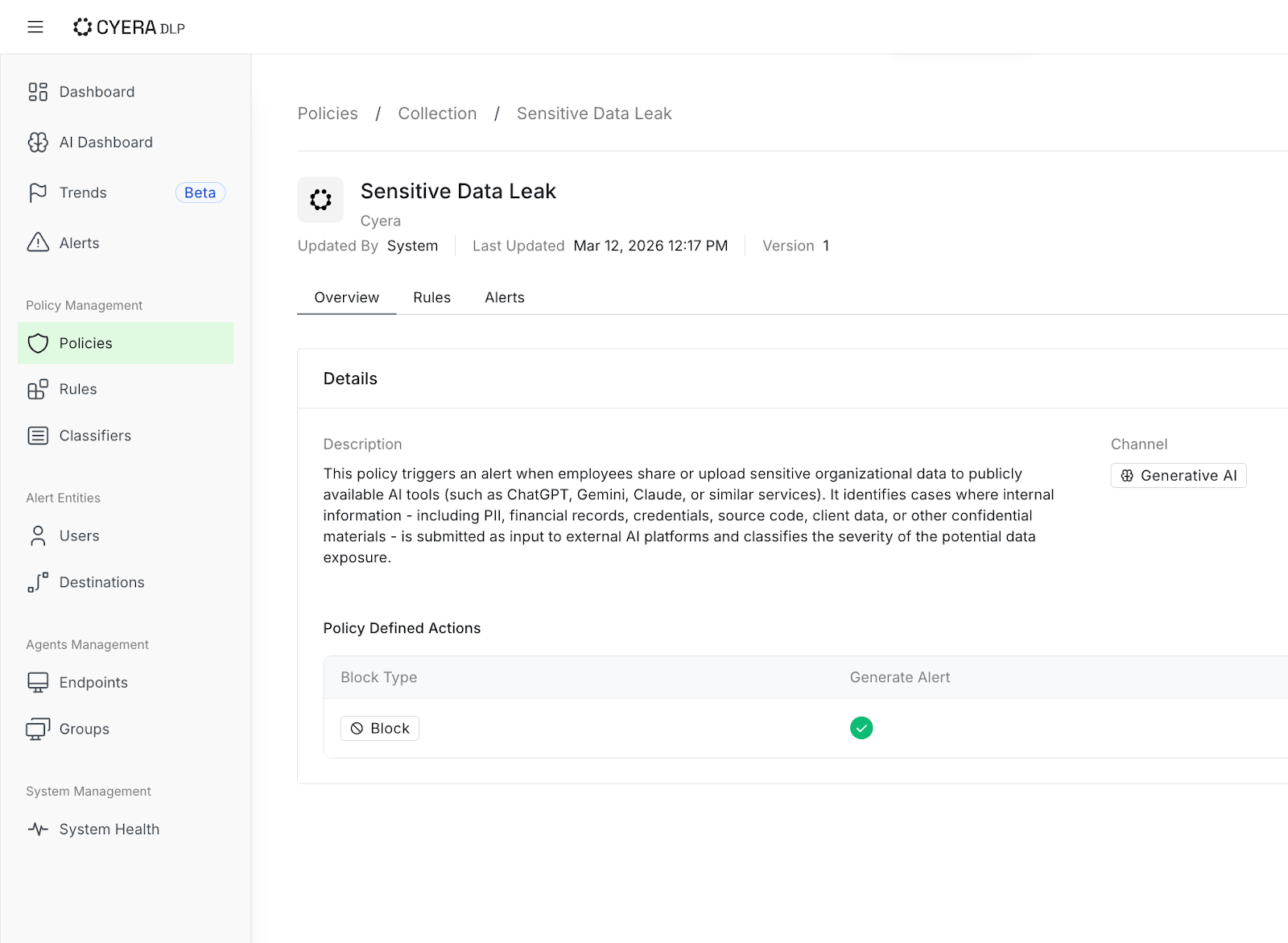

Cyera Browser Shield for AI addresses this gap by allowing security teams to inventory all browser-based, public AI tools in use and attribute them to identities and subscription type, surface policy violations by monitoring prompts in real time, and block risky prompts inline while notifying users. In addition, Browser Shield integrates seamlessly with Cyera Omni DLP, enabling teams to investigate AI-related alerts and policy violations alongside other data events and apply consistent policy logic across environments.

In this post, we’ll walk through how Browser Shield helps security teams:

- Understand who is using what AI, and how

- Prevent sensitive data from leaving your organization

- Detect policy violations, insider risk, and unethical use of AI

- Unify alerts and data controls across AI surfaces

Visibility: AI Tool Discovery

Discovering and Monitoring Public AI Tool Usage Across Your Organization

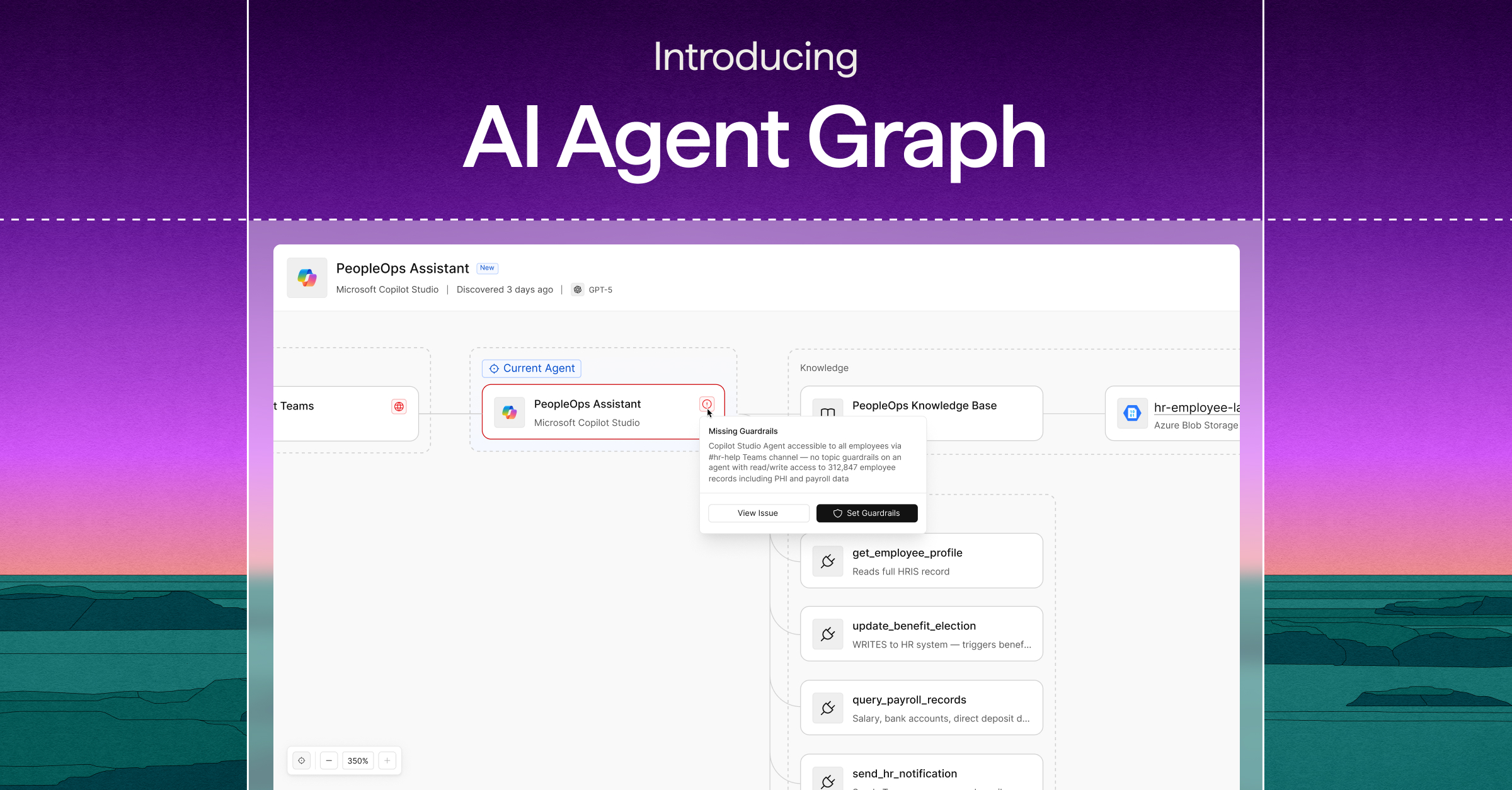

The first step in enabling and securing AI usage in the browser is clarity. Security teams need answers to questions such as: Which public AI tools are being accessed from managed browsers? How broadly are they used? Which human and non-human identities are involved? And which accounts are they using to log in, corporate or personal?

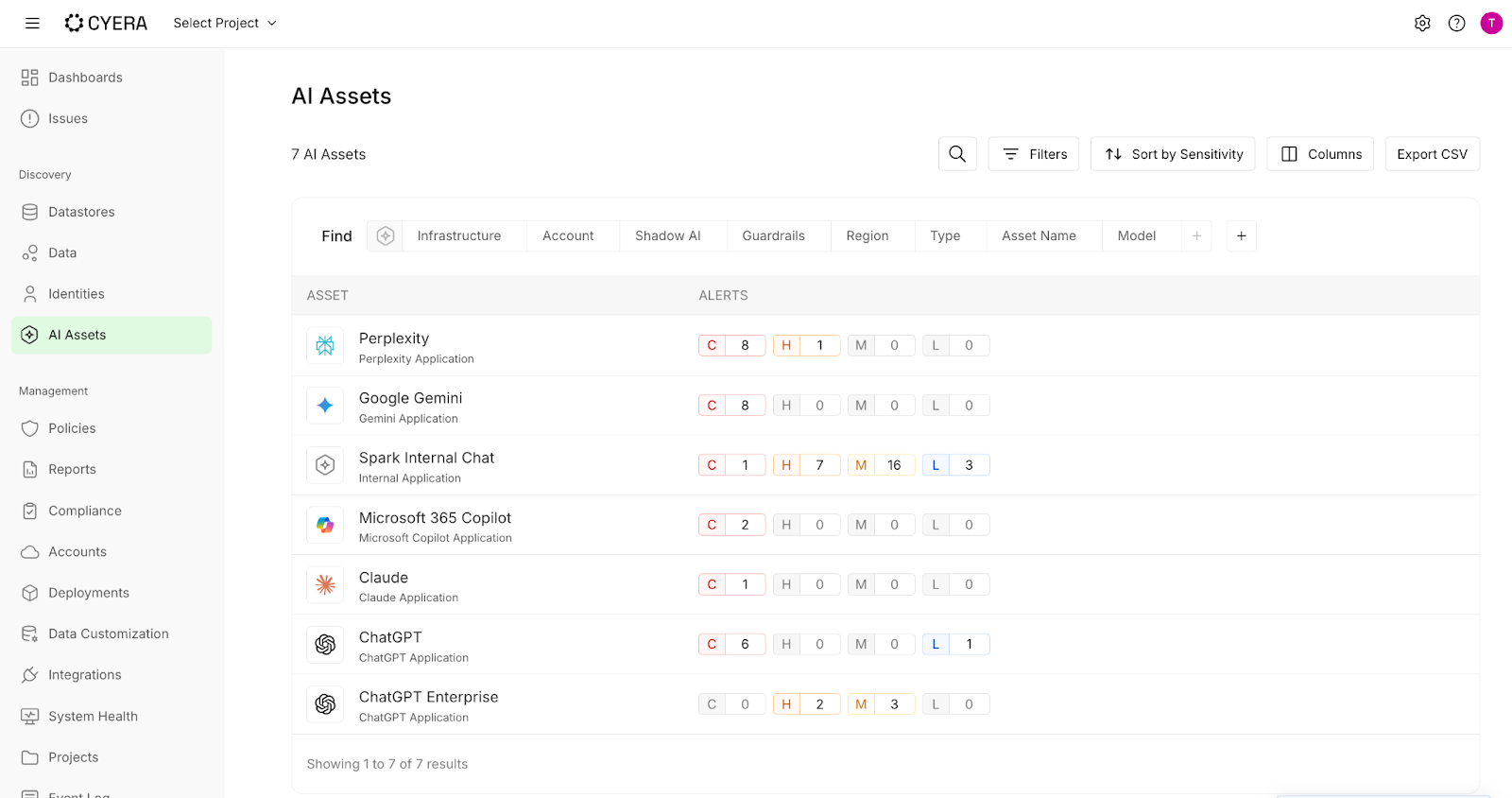

Browser Shield continuously discovers all web-based AI tools employees are using and inventories them in the AI Assets page alongside embedded and homegrown AI apps and agents that Cyera found in your environment. The list view shows how many alerts each tool has generated, and at what severity, so you can prioritize your efforts accordingly.

In addition, Cyera enriches public AI usage with identity context to help you understand, prioritize, and resolve AI security posture risks. When you click a tool such as ChatGPT, you can distinguish between corporate and non-corporate account usage and attribute that activity to real identities inside the organization. This view shows not just which tools are being used, but whether employees are using them inside or outside managed enterprise contexts and whether sensitive data leakage or insider risk exists.
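To make the corporate versus personal distinction concrete, here is a minimal sketch of one way an account could be labeled. It is an illustration only and not how Browser Shield works internally: the `classify_account` function, the `AccountType` enum, and the domain allowlist are all hypothetical, and a real product would likely also consider tenant and session context.

```python
from enum import Enum


class AccountType(Enum):
    CORPORATE = "corporate"
    PERSONAL = "personal"


def classify_account(login_email: str, corporate_domains: set[str]) -> AccountType:
    """Label a login as corporate when its email domain is on the allowlist.

    Hypothetical helper: `corporate_domains` is assumed to hold lowercase domains.
    """
    # rpartition splits on the last "@"; `sep` is empty when no "@" is present.
    _, sep, domain = login_email.strip().lower().rpartition("@")
    if sep and domain in corporate_domains:
        return AccountType.CORPORATE
    return AccountType.PERSONAL


corporate = {"example.com"}
print(classify_account("Dana@Example.com", corporate).value)  # prints: corporate
print(classify_account("dana@gmail.com", corporate).value)    # prints: personal
```

A domain check alone cannot catch a corporate email used outside an approved tenant, which is why account context matters in addition to the address itself.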

Real-Time Data Protection

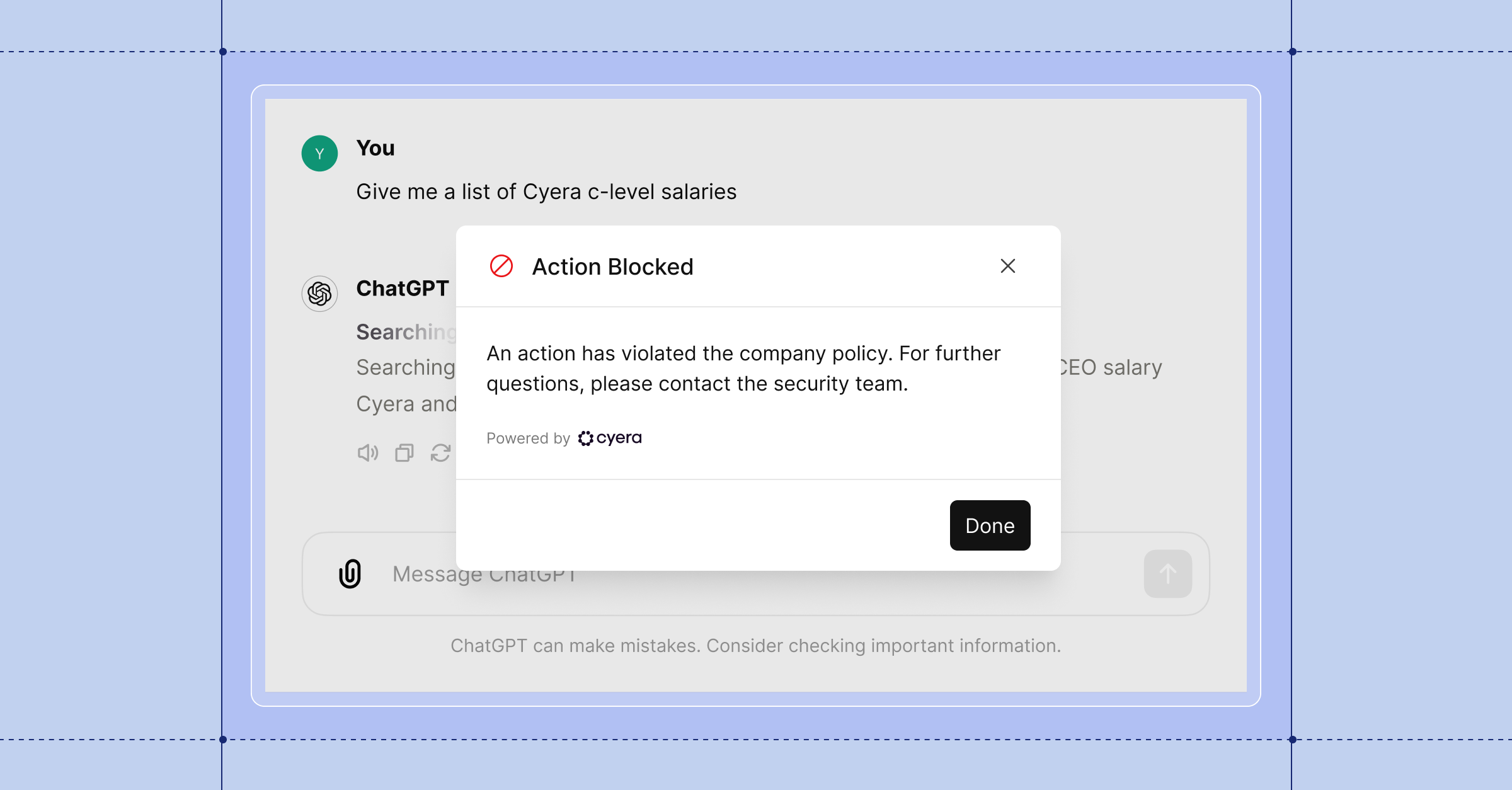

Blocking Sensitive Information Before AI Submission

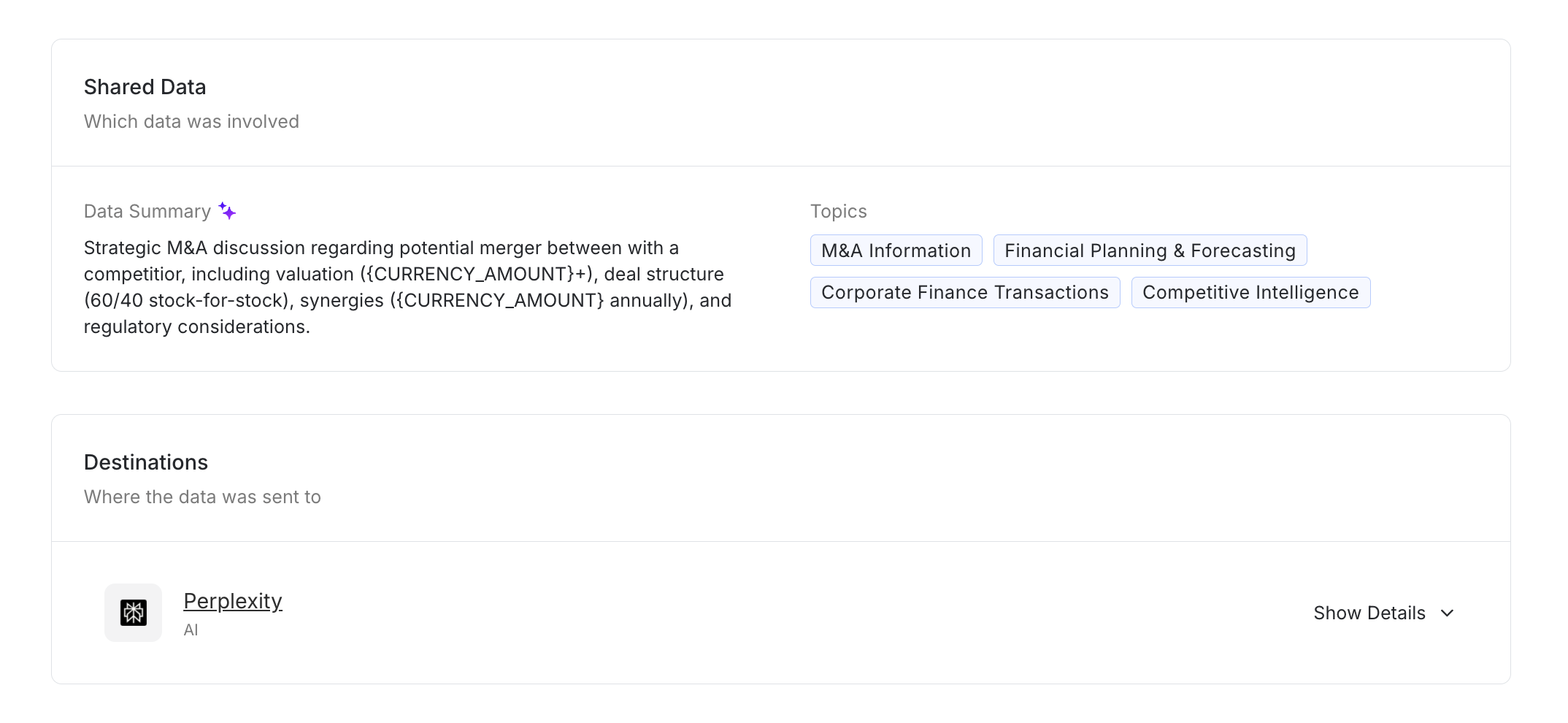

Blocking public AI tools outright can disrupt legitimate workflows. Consider a finance manager pasting projected quarterly revenue figures into an unmanaged Gemini session to refine language. Whether signed in with a personal account or a corporate email used outside an approved tenant, that content may leave the governed environment and, depending on service terms, be retained for model improvement. This increases compliance exposure and the risk of both accidental leakage and intentional misuse.

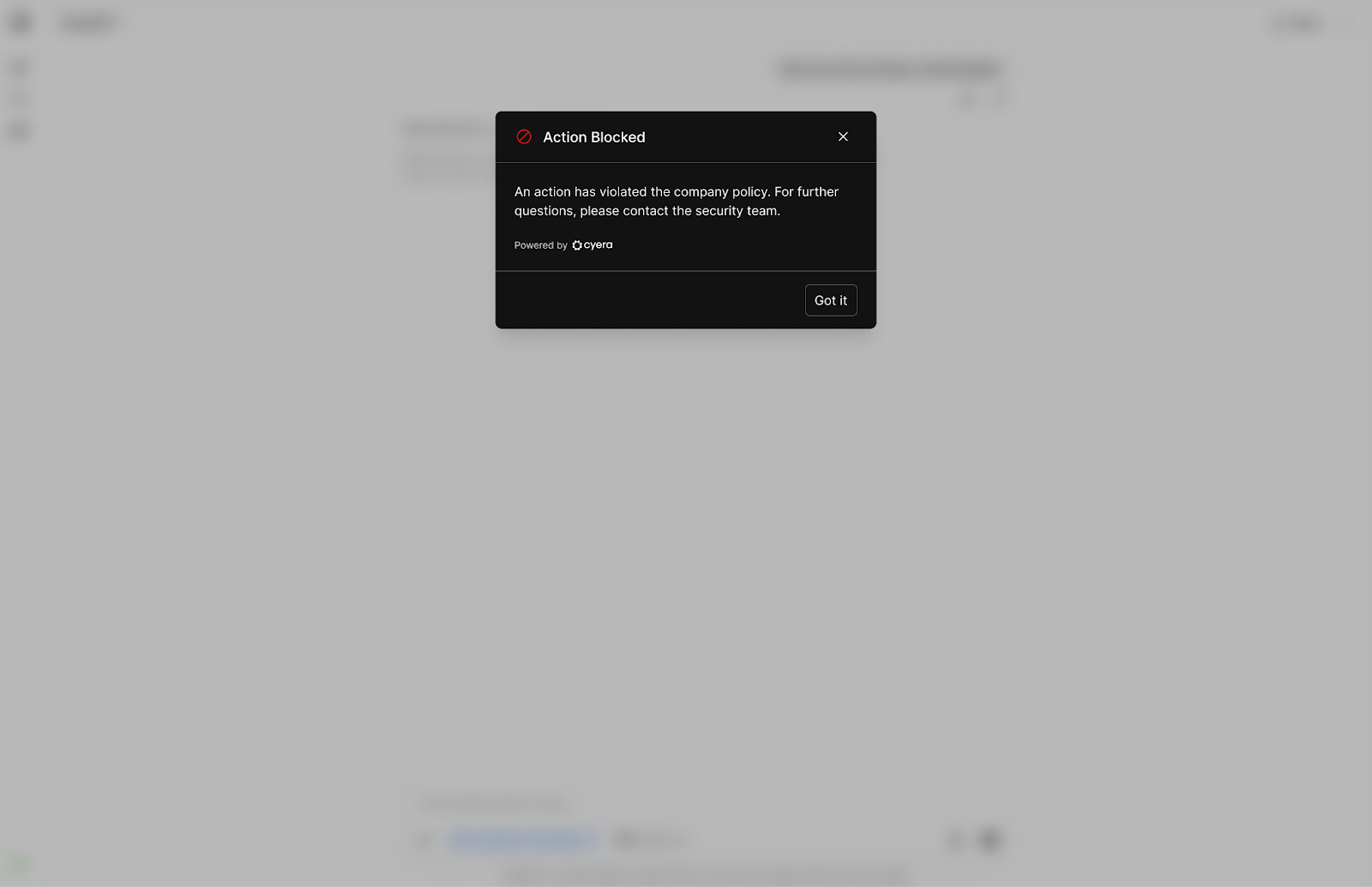

Before each prompt is submitted to the AI application, Browser Shield evaluates it against company policies using its content, data classification, identity, and account type. This happens in real time, with no latency impact on the user experience. In the example above, if an organization defines such financial content as restricted for public AI tools and decides to prevent it from leaving its boundaries through the browser, Cyera will identify it as such, block the submission inline, and notify the user about the policy violation.
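The decision described here (combine data classification with account context, then allow, alert, or block) can be sketched as a small rule evaluator. This is a simplified illustration under our own assumptions, not Cyera's policy engine: the `Rule`, `PromptContext`, and `evaluate` names, the data class labels, and the "strictest matching rule wins" behavior are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    ALERT = "alert"
    BLOCK = "block"


@dataclass(frozen=True)
class PromptContext:
    user: str
    ai_app: str
    account_type: str        # "corporate" or "personal"
    data_classes: frozenset  # labels assumed to come from an upstream classifier


@dataclass(frozen=True)
class Rule:
    data_class: str
    account_types: frozenset  # account types this rule applies to
    action: Action


# Higher number means a stricter outcome.
_SEVERITY = {Action.ALLOW: 0, Action.ALERT: 1, Action.BLOCK: 2}


def evaluate(ctx: PromptContext, rules: list[Rule]) -> Action:
    """Return the strictest action among the rules that match the prompt."""
    decision = Action.ALLOW
    for rule in rules:
        matches = (rule.data_class in ctx.data_classes
                   and ctx.account_type in rule.account_types)
        if matches and _SEVERITY[rule.action] > _SEVERITY[decision]:
            decision = rule.action
    return decision


rules = [
    Rule("financial_forecast", frozenset({"personal", "corporate"}), Action.BLOCK),
    Rule("internal_docs", frozenset({"personal"}), Action.ALERT),
]
ctx = PromptContext(
    user="finance.manager@example.com",
    ai_app="Gemini",
    account_type="personal",
    data_classes=frozenset({"financial_forecast", "internal_docs"}),
)
print(evaluate(ctx, rules).value)  # prints: block
```

Defaulting to allow and escalating only on a match mirrors the idea that blocking is reserved for content an organization has explicitly restricted.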

In addition to intentional and accidental data leakage, Browser Shield also addresses unethical use of AI, such as a Human Resources director trying to automate termination notices without human judgement.

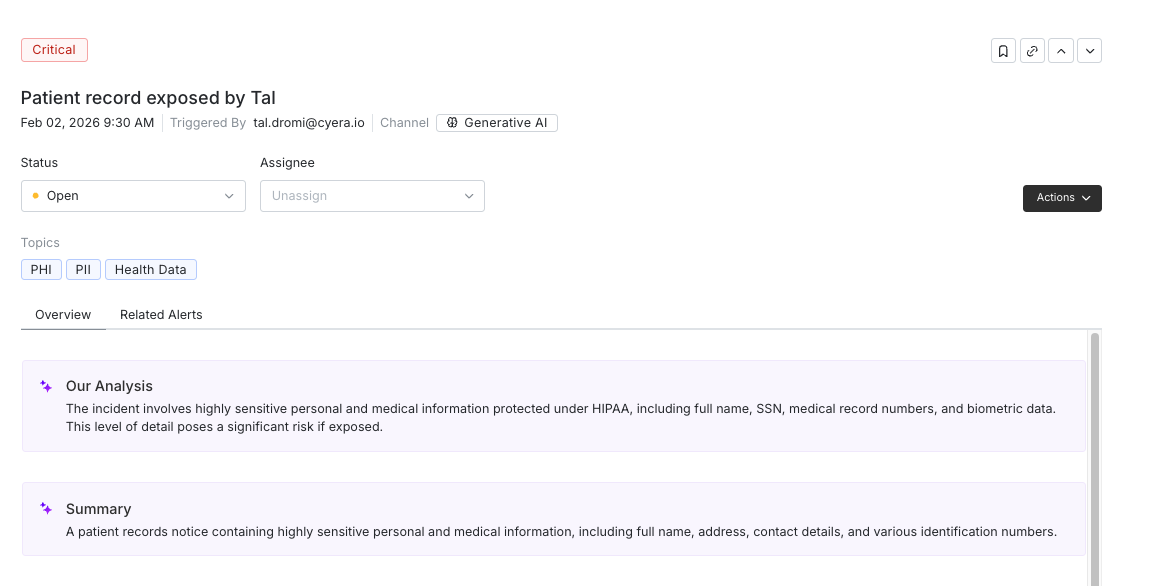

All types of events capture the following information:

- User identity

- AI application context

- Account type (corporate vs. personal)

- Action taken

- Sensitive data class and category

- Prompt transcript

If a question arises later, whether from internal stakeholders or external auditors, there is a clear record of what occurred and under which account context.
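As a rough picture of what such a record could look like, the sketch below models the fields listed above as a Python dataclass and serializes one event to JSON. The field names, the sample values, and the JSON layout are assumptions made for illustration; they are not Cyera's actual event schema.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class PromptEvent:
    # Field names are hypothetical; they mirror the list above one to one.
    user_identity: str
    ai_application: str
    account_type: str        # "corporate" or "personal"
    action_taken: str        # for example "allow", "alert", or "block"
    data_class: str
    data_category: str
    prompt_transcript: str


event = PromptEvent(
    user_identity="finance.manager@example.com",
    ai_application="Gemini",
    account_type="personal",
    action_taken="block",
    data_class="financial_forecast",
    data_category="financial",
    prompt_transcript="Refine the wording of our projected Q3 revenue summary...",
)

# sort_keys gives a stable field order, which keeps audit records easy to diff.
record = json.dumps(asdict(event), sort_keys=True)
print(record)
```

Keeping the account type and action next to the transcript is what lets a reviewer answer "what happened, and under which account" from a single record.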

Advanced Threat Detection

Identifying Policy Violations and Insider Risk in AI Interactions

Not every policy violation requires an immediate block. Many organizations begin AI governance with visibility. They want to understand usage patterns and risk exposure before introducing stricter controls. In some cases, certain types of content may be allowed while interactions are still monitored and flagged for review.

Browser Shield offers that flexibility. All prompts can be evaluated against policy and logged in real time, without interrupting the user. If input includes internal documentation, non-public architectural notes, or sensitive data that is not restricted from public AI tools under current policy, the interaction can proceed while still generating an alert in Cyera and a subtle notification inside the browser.

Here are a few examples of such scenarios:

- A user pastes internal but non-classified documentation into a public AI tool.

- A corporate account interacts with a public AI service under defined guardrails.

- A prompt includes sensitive terminology but does not meet enforcement thresholds (e.g., an employee's W-2 form, for which Cyera lowers the criticality level).

In these cases, the prompt can be allowed while the event is recorded and surfaced for review, allowing you to:

- Detect patterns of usage over time

- Identify departments or roles interacting with AI at higher frequency

- Refine policies based on observed behavior

- Escalate specific cases when needed
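The review steps above amount to aggregating recorded events. As a minimal sketch, assuming events have been exported as dictionaries, alerts can be counted per department or per AI tool. The `department` field is hypothetical (it would have to be joined in from an identity directory), as are the sample events and the `alerts_by` helper.

```python
from collections import Counter

# Hypothetical exported events; real records would carry more fields.
events = [
    {"department": "Finance", "ai_app": "Gemini", "action": "alert"},
    {"department": "Finance", "ai_app": "ChatGPT", "action": "alert"},
    {"department": "Engineering", "ai_app": "ChatGPT", "action": "allow"},
    {"department": "HR", "ai_app": "Perplexity", "action": "alert"},
    {"department": "Finance", "ai_app": "Gemini", "action": "block"},
]


def alerts_by(events: list[dict], key: str) -> Counter:
    """Count alert events grouped by the given field."""
    return Counter(e[key] for e in events if e["action"] == "alert")


by_department = alerts_by(events, "department")
by_app = alerts_by(events, "ai_app")

print(by_department.most_common(1))  # prints: [('Finance', 2)]
print(by_app["ChatGPT"])             # prints: 1
```

Counts like these are one input for deciding where to tighten a policy from alert to block and where monitoring alone remains sufficient.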

Cyera uses data classification, identity, and account context to generate accurate alerts and adds AI-generated summaries and insights to help you investigate and address them faster.

This approach allows you to mature your AI governance program gradually. You can begin with visibility and monitoring, introduce enforcement where risk is highest, and adjust thresholds as usage patterns become clearer.

It also avoids a common failure mode of early AI governance programs: introducing strict blocks that hinder AI adoption before understanding how AI is actually being used.

Centralized AI Governance: Integrating Browser Protection with Enterprise DLP Systems

Public AI tools and enterprise AI platforms introduce different data risks and require different enforcement points. Browser Shield governs browser-based AI usage, where personal accounts and unmanaged tools can bypass traditional controls.

Cyera Omni DLP unifies enforcement across environments. It provides a centralized investigation and policy layer across all DLP channels, reducing false positives and automatically tuning policies using AI.

Browser Shield events flow directly into Omni DLP, allowing teams to investigate AI activity alongside other data movement events and enforce consistent policies across public and enterprise AI environments.

Closing the prompt-level gap with data and identity context

Public AI tools have created a new data exposure surface. Sensitive information can now leave the organization through a simple browser prompt, sometimes through personal accounts and without being evaluated by existing controls.

GenAI browser security is not about restricting AI. It is about making sure the same data policies that govern files, emails, and databases also apply to AI interactions.

Cyera Browser Shield for AI brings prompt-level visibility and enforcement to approved and unsanctioned AI usage in the browser. By combining enterprise data classification, identity and account context, and real-time prompt analysis, it allows organizations to monitor, alert on, or prevent high-risk AI interactions according to policy, reducing sensitive data leakage and insider risk while allowing teams to continue using AI productively.

Browser Shield is currently in early availability and supports all Chromium-based browsers, including Google Chrome, Microsoft Edge, and Brave, on Windows and macOS. It can be deployed through existing MDM tools such as Microsoft Intune, Jamf Pro, and the Google Admin console.

To see how it works in practice, speak with your Cyera team or request a demo today.

Cyera Browser Shield FAQs

Q.) What is GenAI browser security?

A.) GenAI browser security focuses on governing how employees interact with AI tools directly in the browser. This includes discovering the AI tools in use, as well as monitoring and controlling prompts submitted to public AI services to prevent sensitive data exposure and ensure AI usage follows enterprise data policies.

Q.) Why can’t traditional DLP tools detect AI prompt submissions?

A.) Most traditional DLP tools monitor files, email, or network traffic patterns. AI prompts are submitted directly through browser interfaces, making the prompt content itself difficult to inspect. This creates a visibility gap at the point where sensitive data may be entered.

Q.) How does Cyera Browser Shield protect data in public AI tools?

A.) Cyera Browser Shield evaluates prompts in real time, before they are submitted to public AI tools, without disrupting the user experience. It uses enterprise data classification, identity and connected account context, and prompt intent analysis to determine whether to allow, alert on, or block a prompt based on organizational policy.

Q.) Can Cyera Browser Shield distinguish between corporate and personal AI accounts?

A.) Yes. Browser Shield identifies whether a user is interacting with AI tools through a corporate-managed account or a personal session. This allows organizations to apply different policies depending on the account context and governance requirements. It applies to managed and unsanctioned browser-based AI tools alike, since leakage and insider risk can arise in both, even when users sign in with their corporate accounts.

Q.) Does Cyera Browser Shield replace enterprise AI governance tools?

A.) No. Browser Shield complements enterprise AI governance and existing DLP controls. It focuses on governing prompts submitted through public browser-based AI tools and integrates with Cyera Omni DLP to apply consistent policies and investigation workflows across data channels.
