You Can't Protect What You Can't See: Why AI Visibility Is the New Security Imperative

Key Takeaways

- Shadow AI poses major security risks: Employees use unauthorized AI tools that bypass security reviews, creating data exposure vulnerabilities

- AI visibility requires mapping three critical elements: Which tools are in use, who is using them, and what data they access

- Data security forms the foundation of AI security: Every AI model is only as secure as the sensitive data it processes

- AI Guardian provides actionable intelligence: Beyond simple discovery, it delivers business insights that enable secure AI adoption

- Proactive AI security creates competitive advantage: Organizations that secure AI early will lead innovation while others face compliance gaps

Security leaders have spent decades building controls around a simple premise: know your environment, secure your environment. Every tool in the stack assumes you have a map.

Over the last 18 months, AI adoption has moved faster than any technology cycle security teams have had to manage. Employees aren’t announcing when they paste customer data into ChatGPT. Developers aren’t filing tickets when they wire a new LLM into a production pipeline. Vendors aren’t sending notifications when they quietly enable Copilot across your SaaS stack.

This visibility gap isn’t a gap in security processes; it’s a gap in architecture. It’s also a gap traditional security tools were never designed to close, because they were built for a world where corporate data stayed inside systems teams could control. As AI continues to break that control, the question isn’t whether sensitive data is flowing into AI systems. The question is whether you’ll find out proactively or after the fact.

What Makes AI Visibility the #1 Security Challenge for Enterprises?

Without that visibility, security teams are left with two options: block AI across the board, or look the other way. Both end in the same place. Blanket blocks push employees toward workarounds, and looking away lets adoption grow unchecked, so Shadow AI spreads throughout your organization and sensitive data flows into AI tools completely unmonitored.

The real challenge isn't picking between access and control. It's getting enough visibility to allow both. Before you can move forward, you need answers to a few fundamental questions:

- What AI tools are in use?

Marketing might be running prompts through ChatGPT, Engineering could be building on Bedrock and Claude, while Sales may be leaning on Copilot embedded in their CRM. Every team adopts AI differently, and most security teams have no unified view across public, embedded, and homegrown models.

- Who is using them?

A developer testing a new LLM integration looks very different from a finance analyst pasting quarterly projections into a chatbot. Without user-level attribution, security teams can't assess risk or enforce policy by role, department, or business unit.

- What data are they touching?

AI tools don't just consume data. They ingest it, process it, and in some cases, store it. That includes customer PII, source code, financial forecasts, and regulated health records. If you can't map which data flows into which AI tool, you have no way of knowing whether sensitive information is being exposed.

- Are these tools approved or shadow AI?

Sanctioned tools go through procurement, legal, and security review. Shadow AI skips all of it. Employees adopt unsanctioned tools because they want to move faster, but without visibility, security teams can't tell the difference between an approved Copilot deployment and an unauthorized ChatGPT plugin handling sensitive data.
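To make the four questions concrete, here is a minimal sketch of how discovery events could be joined into a single inventory that answers them together: which tool, which user and department, which data, and whether the tool is sanctioned. This is an illustration, not AI Guardian's implementation. The domain lists, the `Event` and `Finding` fields, and the data-class labels are all hypothetical, and the sketch assumes an upstream source (proxy logs, for example) plus a data classifier already produce the events.

```python
from dataclasses import dataclass

# Hypothetical catalog of domains treated as AI services; a real deployment
# would need a maintained, much larger catalog.
KNOWN_AI_DOMAINS = {"chat.openai.com", "claude.ai", "copilot.microsoft.com"}
# Hypothetical allowlist of AI tools that passed procurement and security review.
SANCTIONED_AI_DOMAINS = {"copilot.microsoft.com"}
# Hypothetical labels an upstream classifier might attach to outbound data.
SENSITIVE_CLASSES = {"PII", "source_code", "financial", "PHI"}


@dataclass(frozen=True)
class Event:
    """One observed request: who sent what class of data to which domain."""
    user: str
    department: str
    domain: str
    data_classes: frozenset


@dataclass(frozen=True)
class Finding:
    """One inventory row answering: tool, who, what data, sanctioned or not."""
    tool: str
    user: str
    department: str
    sanctioned: bool
    sensitive_data: frozenset


def build_inventory(events: list[Event]) -> list[Finding]:
    findings = []
    for e in events:
        if e.domain not in KNOWN_AI_DOMAINS:
            continue  # not a recognized AI tool, so it stays out of the AI inventory
        findings.append(Finding(
            tool=e.domain,
            user=e.user,
            department=e.department,
            sanctioned=e.domain in SANCTIONED_AI_DOMAINS,
            # Keep only the labels considered sensitive.
            sensitive_data=e.data_classes & SENSITIVE_CLASSES,
        ))
    return findings


def high_risk(findings: list[Finding]) -> list[Finding]:
    # Shadow AI (unsanctioned) that also touches sensitive data.
    return [f for f in findings if not f.sanctioned and f.sensitive_data]
```

With one Finance user sending financial data to `chat.openai.com`, one Sales user sending PII to the sanctioned Copilot domain, and one engineer on a non-AI site, `build_inventory` returns two rows and `high_risk` returns only the Finance user: the combination of an unsanctioned tool and sensitive data is what this sketch flags.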

AI Guardian: Discover Any AI Tool. Defend Your Data.

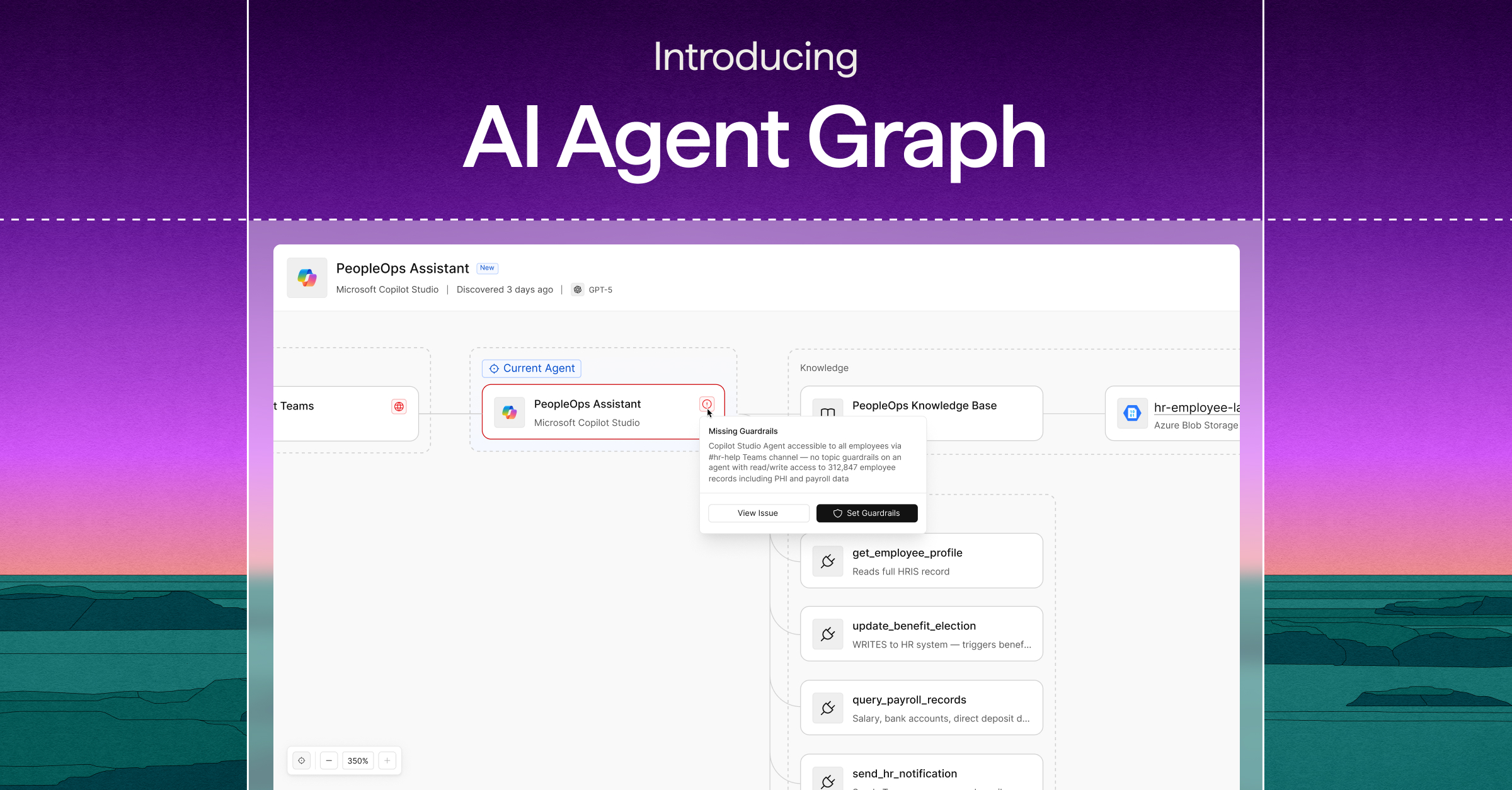

Cyera built AI Guardian to close the visibility gap and give enterprises the control they need to adopt AI with confidence. AI Guardian gives you full visibility into every type of AI in use across your organization. It doesn't stop at a simple inventory. It delivers real business insights that show exactly who is using AI, what datastores those tools access, and whether the tool is sanctioned or Shadow AI.

And it goes beyond just flagging risk. AI Guardian surfaces both the risks and the opportunities tied to AI usage. Security teams get the context they need to enforce the right policies. Business leaders get the insight to double down on the right initiatives.

The bottom line: you don't have to choose between blocking AI and ignoring the risks. You can use AI tools securely.

Why Data Security Expertise Makes Cyera the Leader in AI Protection

AI security starts with data security.

Every AI model, agent, and workflow is only as secure as the data it touches. Cyera's platform discovers, classifies, and protects data everywhere it lives: across cloud environments, SaaS applications, and AI use cases.

AI Guardian extends that foundation into the AI layer, giving organizations:

- Comprehensive AI discovery across public, embedded, and homegrown models

- Deep data context that connects AI usage to sensitive data flows

- Actionable intelligence that turns security from a blocker into a strategic enabler

The enterprises that move fastest on AI security will be the ones leading the next wave of innovation. The ones that wait will face growing risk, compliance gaps, and competitive disadvantage.

Ready to see what's happening with AI inside your organization? Book a demo or read the AI Guardian white paper "Securing AI Starts with Data Security" to learn how leading enterprises are adopting AI safely at scale.

AI Visibility and Security: Frequently Asked Questions

Q.) What is AI visibility and why does it matter for security?

A.) AI visibility means having complete knowledge of which AI tools are being used in your organization, who is using them, and what data they access. It matters because you cannot secure what you cannot see. Without visibility, sensitive data flows into unknown AI systems, creating security vulnerabilities and compliance risks.

Q.) How do you detect shadow AI in an enterprise environment?

A.) Shadow AI detection requires monitoring network traffic, application usage, and data flows across your entire IT infrastructure. AI Guardian automatically discovers public AI tools like ChatGPT, embedded platforms like Copilot, and homegrown models running on cloud services, providing a unified view of sanctioned and unsanctioned AI usage.

Q.) What types of data are most at risk from AI tools?

A.) Customer personally identifiable information (PII), source code, financial forecasts, and regulated health records are the most vulnerable. These data types often get copied into AI prompts or uploaded to AI platforms without proper security controls, potentially exposing sensitive information to unauthorized access or storage.

Q.) How does AI Guardian differ from traditional security monitoring tools?

A.) AI Guardian specifically focuses on AI discovery and data context, going beyond simple network monitoring. It identifies AI usage patterns, maps data flows to specific AI tools, and provides business intelligence about AI adoption trends, enabling security teams to make informed policy decisions rather than just blocking access.

Q.) Can you secure AI without blocking employee productivity?

A.) Yes, the key is visibility-based security rather than blanket restrictions. By understanding which AI tools employees use and what data they access, security teams can implement targeted policies that allow safe AI usage while protecting sensitive information. This approach enables innovation while maintaining security controls.
